AI/ML/GenAI on AWS Workshop


Summary Report: “AI/ML/GenAI on AWS Workshop”

Event Objectives

- Understand the AI/ML landscape and AWS services ecosystem in Vietnam

- Learn end-to-end machine learning with Amazon SageMaker

- Explore Generative AI capabilities with Amazon Bedrock

- Master prompt engineering and RAG (Retrieval-Augmented Generation) techniques

- Build practical AI/ML solutions using AWS services

Event Details

- Location: AWS Vietnam Office

- Date & Time: 8:30 AM – 12:00 PM, Saturday, November 15, 2025

Speakers & Coordinators

Instructors:

- Lâm Tuấn Kiệt – Senior DevOps Engineer, FPT Software – Amazon SageMaker and ML Services Overview

- Đinh Lê Hoàng Anh – Cloud Engineer Trainee, FCAJ Swinburne University of Technology – Amazon Bedrock and AWS AI/ML Services

- Danh Hoàng Hiếu Nghị – Fresher AI Engineer, Renova Cloud – Amazon Bedrock AgentCore, live demonstrations and hands-on guidance

Coordinators:

- AWS Vietnam Community Team

- FCJ Program Leaders

Event Agenda

8:30 – 9:00 AM: Welcome & Introduction

- Participant registration and networking

- Workshop overview and learning objectives

- Ice-breaker activity

- Overview of the AI/ML landscape in Vietnam

9:00 – 10:30 AM: AWS AI/ML Services Overview

Amazon SageMaker – End-to-end ML Platform

Data Preparation and Labeling:

- SageMaker Data Wrangler for data preprocessing

- Ground Truth for data labeling and annotation

- Feature Store for feature management and reuse

Model Training, Tuning, and Deployment:

- Built-in algorithms and custom training scripts

- Hyperparameter tuning with automatic model optimization

- Model deployment options: real-time, batch, and serverless inference

- A/B testing and multi-model endpoints
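
As a rough illustration of the A/B testing item above: an endpoint that hosts several model variants typically splits traffic in proportion to per-variant weights. The sketch below is plain Python, not the SageMaker SDK, and the variant names and weights are hypothetical.

```python
import random
from collections import Counter

def traffic_shares(weights):
    """Convert raw variant weights into fractional traffic shares."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one variant needs a positive weight")
    return {name: w / total for name, w in weights.items()}

def route(weights, rng):
    """Pick one variant for a request, proportionally to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]

# Hypothetical variants: most traffic stays on the current model,
# a small share goes to the candidate being evaluated.
variant_weights = {"model-a": 9.0, "model-b": 1.0}
shares = traffic_shares(variant_weights)

rng = random.Random(0)  # seeded so the simulation is repeatable
counts = Counter(route(variant_weights, rng) for _ in range(10_000))
print(shares)
print(counts)
```

Shifting the weights gradually toward the candidate is one common way to roll out a new model while limiting exposure if it underperforms.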

Integrated MLOps Capabilities:

- SageMaker Pipelines for ML workflow automation

- Model Registry for version control and governance

- Model Monitor for detecting data drift and model quality degradation

- Integration with CI/CD tools for continuous deployment
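
To make the idea of data drift concrete, the sketch below compares a training-time feature sample with production samples using the Population Stability Index (PSI). PSI is a stand-in chosen for illustration, not a description of how Model Monitor computes drift internally; the bin count and sample data are assumptions.

```python
import math

def psi(baseline, current, bins=5):
    """Population Stability Index between two numeric samples."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0

    def fractions(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = fractions(baseline), fractions(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))

baseline = [x / 10 for x in range(100)]        # training-time feature values
same = [x / 10 for x in range(100)]            # unchanged production traffic
shifted = [x / 10 + 4.0 for x in range(100)]   # production values drifted upward

print(psi(baseline, same))
print(psi(baseline, shifted))
```

An identical distribution scores zero, and the score grows as the production distribution moves away from the baseline. A monitoring job would compare such a score against a threshold and raise an alert when it is exceeded.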

Live Demo: SageMaker Studio walkthrough

- Creating a notebook instance

- Training a machine learning model

- Deploying and testing the model endpoint

10:30 – 10:45 AM: Coffee Break

- Networking and refreshments

- Q&A with AWS experts

10:45 AM – 12:00 PM: Generative AI with Amazon Bedrock and AWS AI/ML Services

AWS AI/ML Services Overview

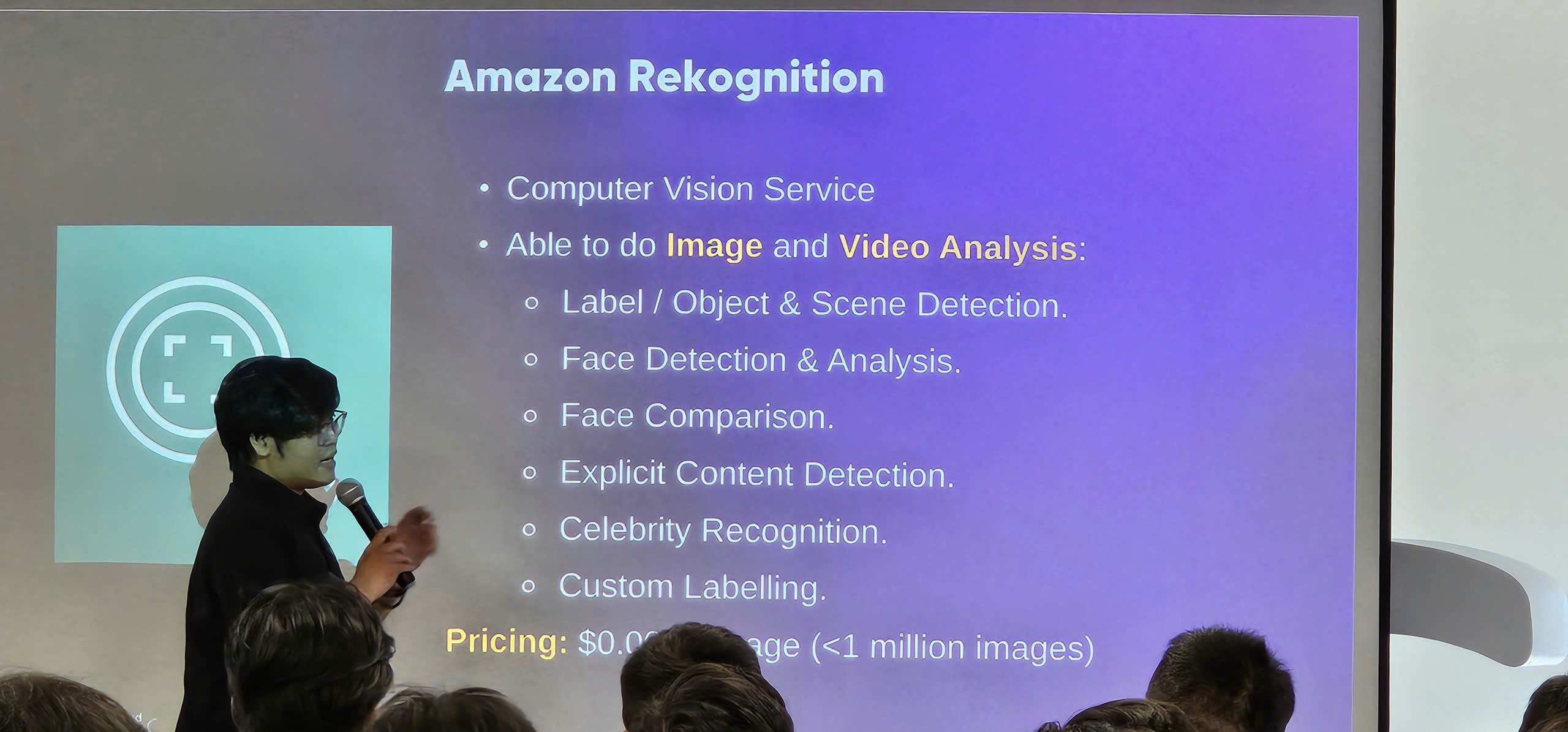

- Amazon Rekognition: Computer vision for image and video analysis

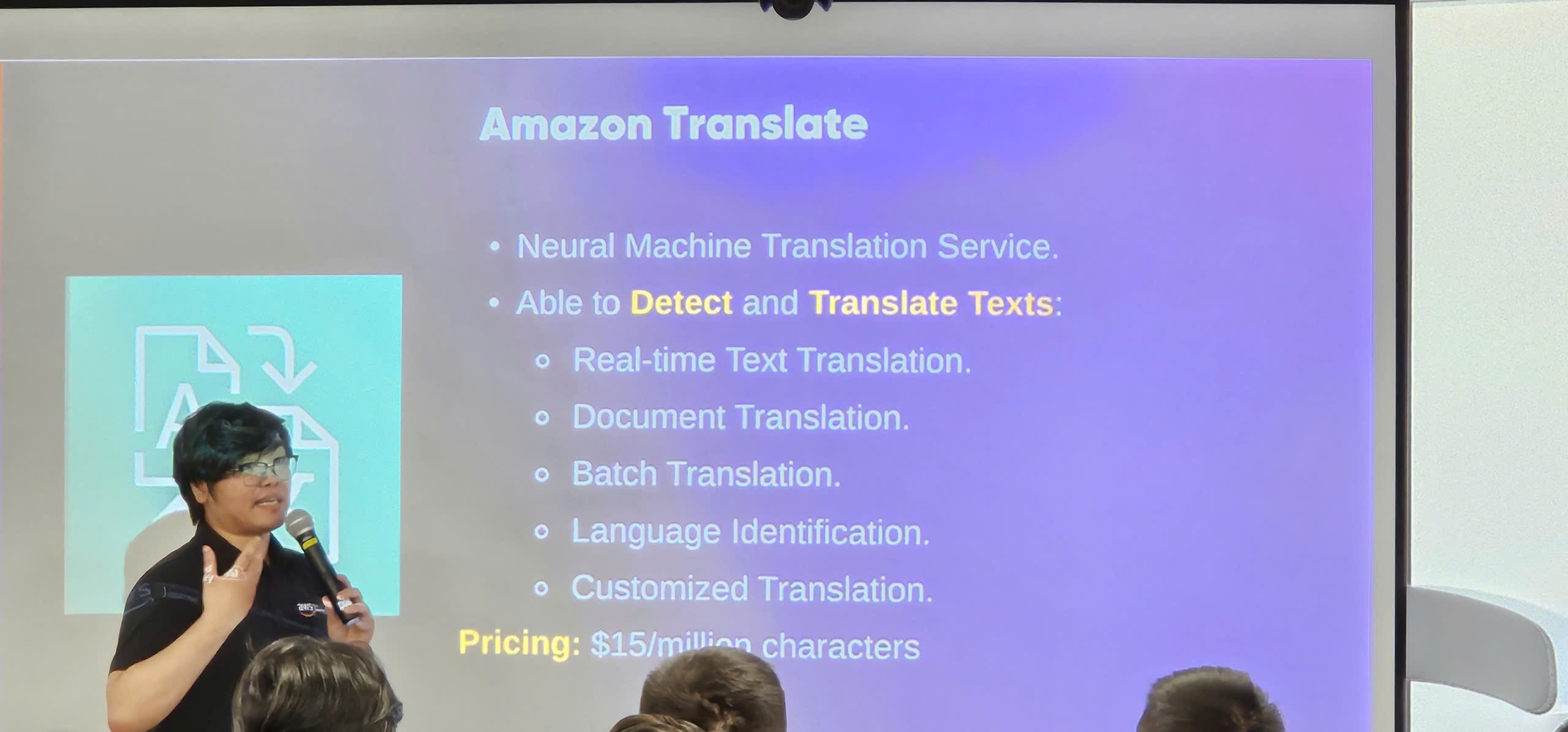

- Amazon Translate: Automatic text translation with neural machine translation

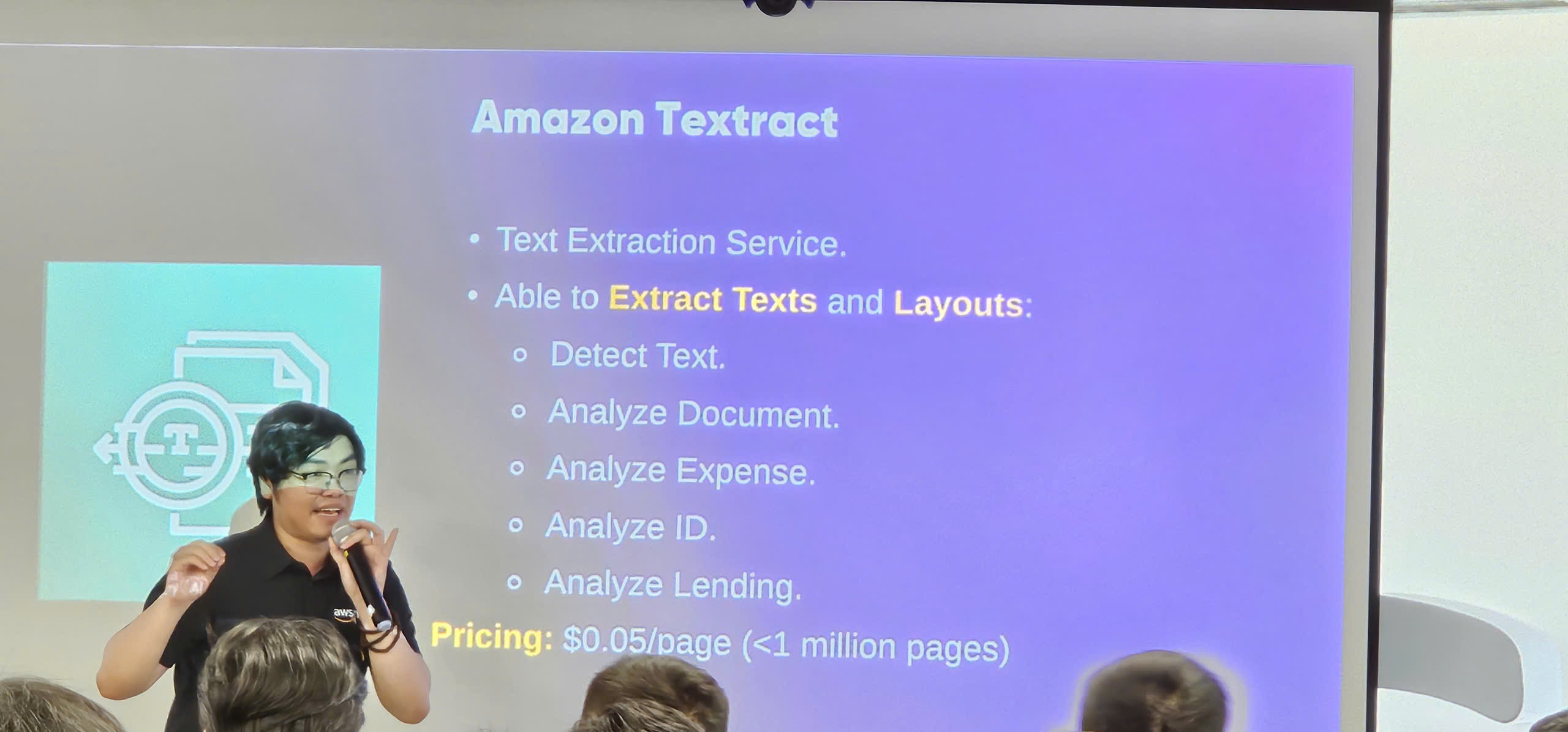

- Amazon Textract: Extract text and data from documents

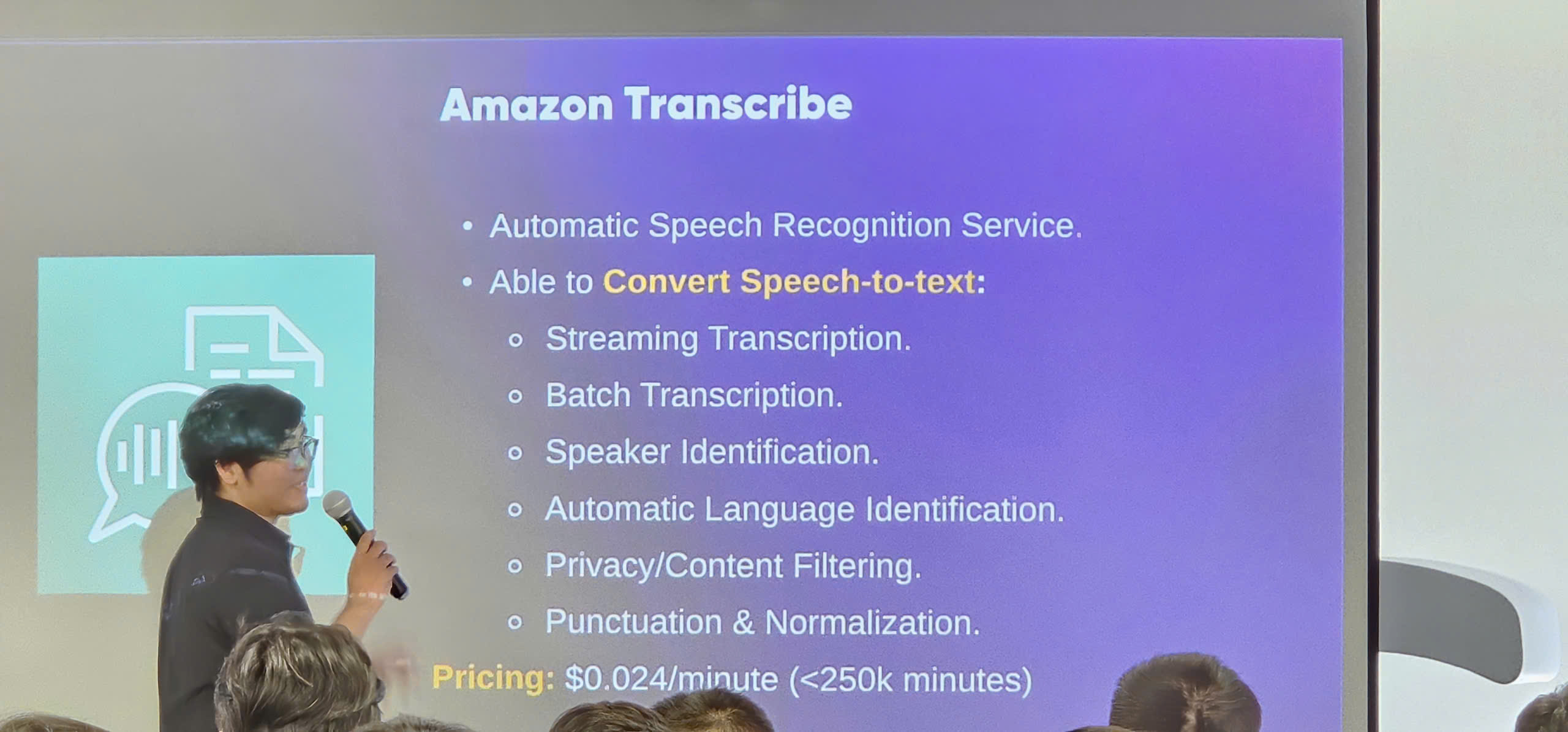

- Amazon Transcribe: Automatic speech-to-text conversion

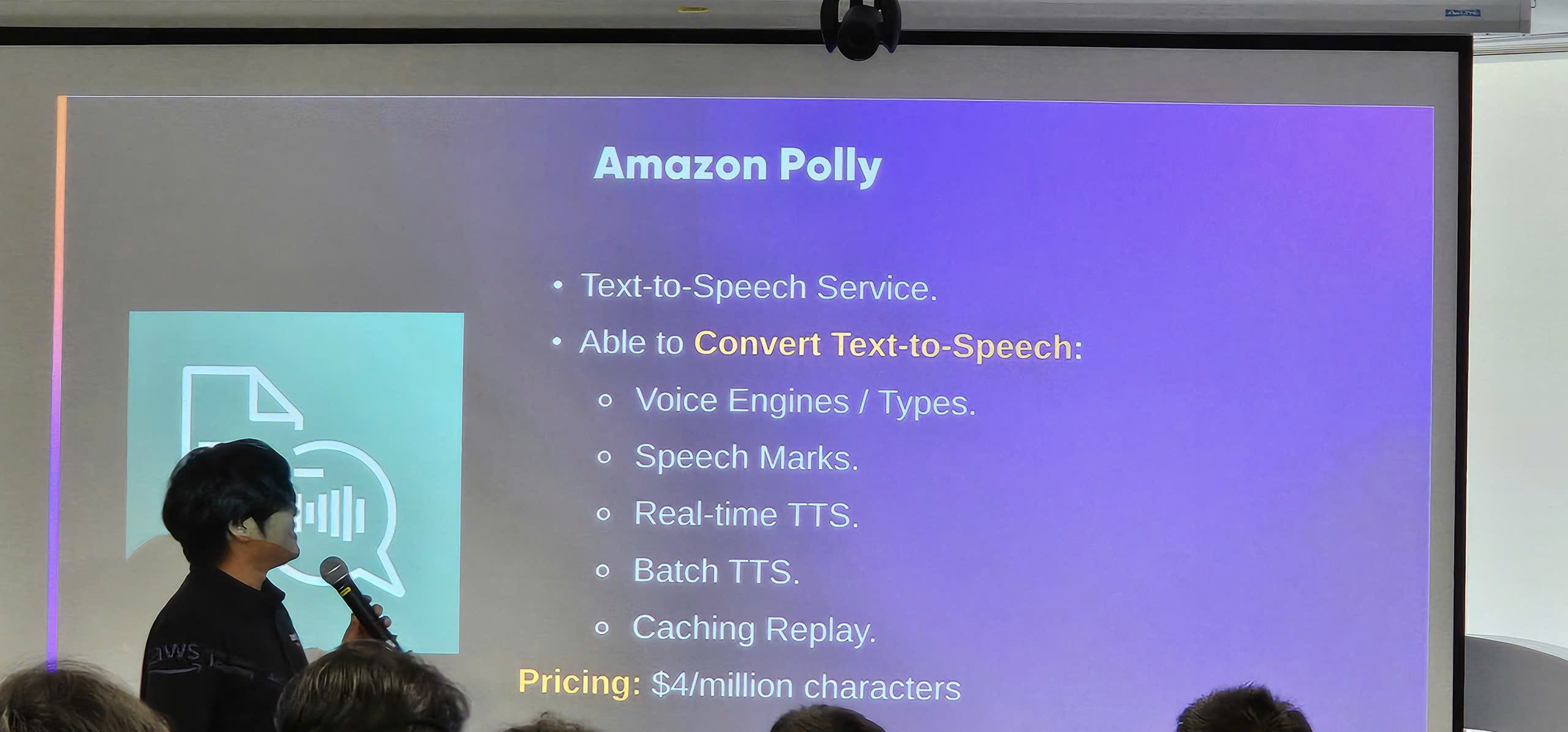

- Amazon Polly: Text-to-speech with natural-sounding voices

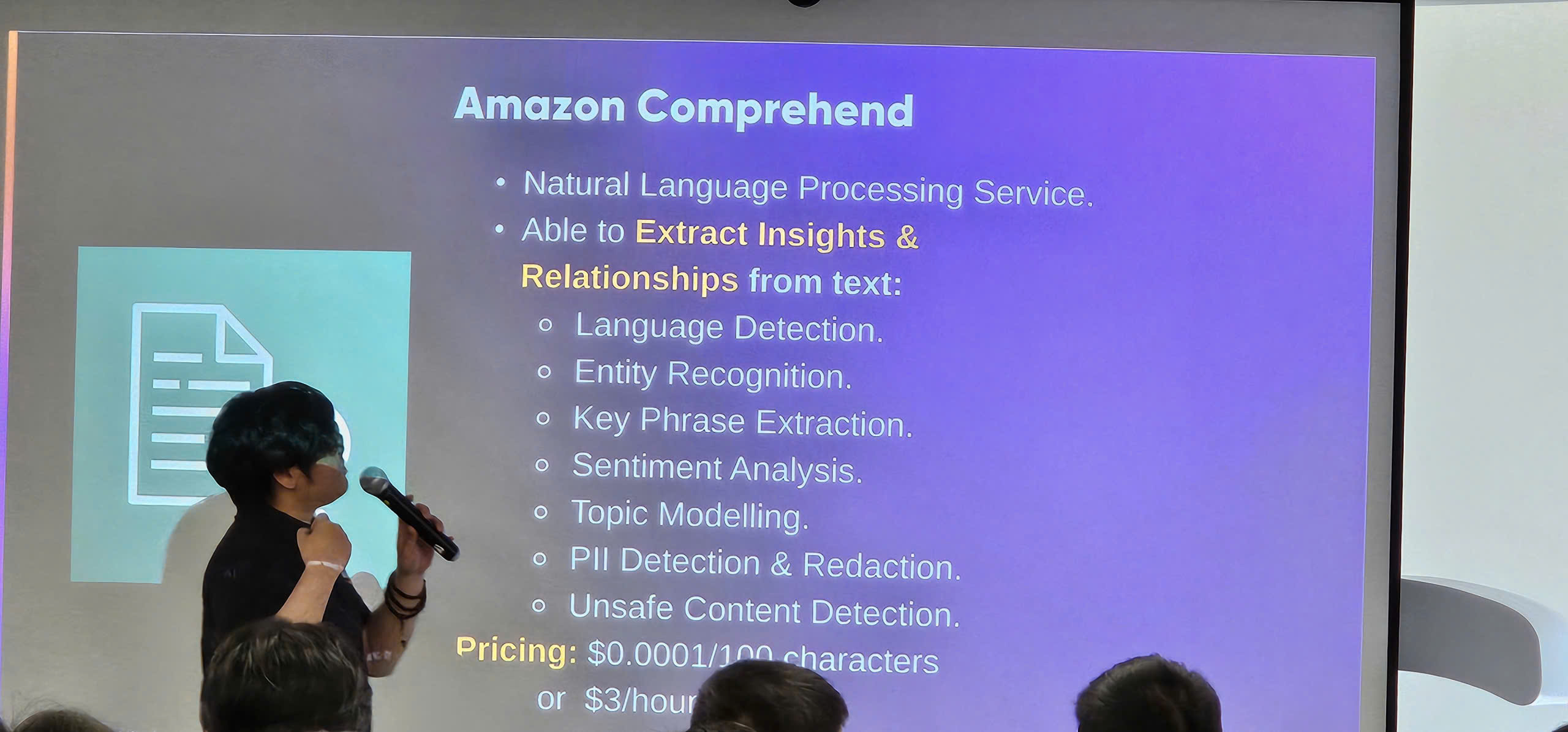

- Amazon Comprehend: Natural language processing and text analytics

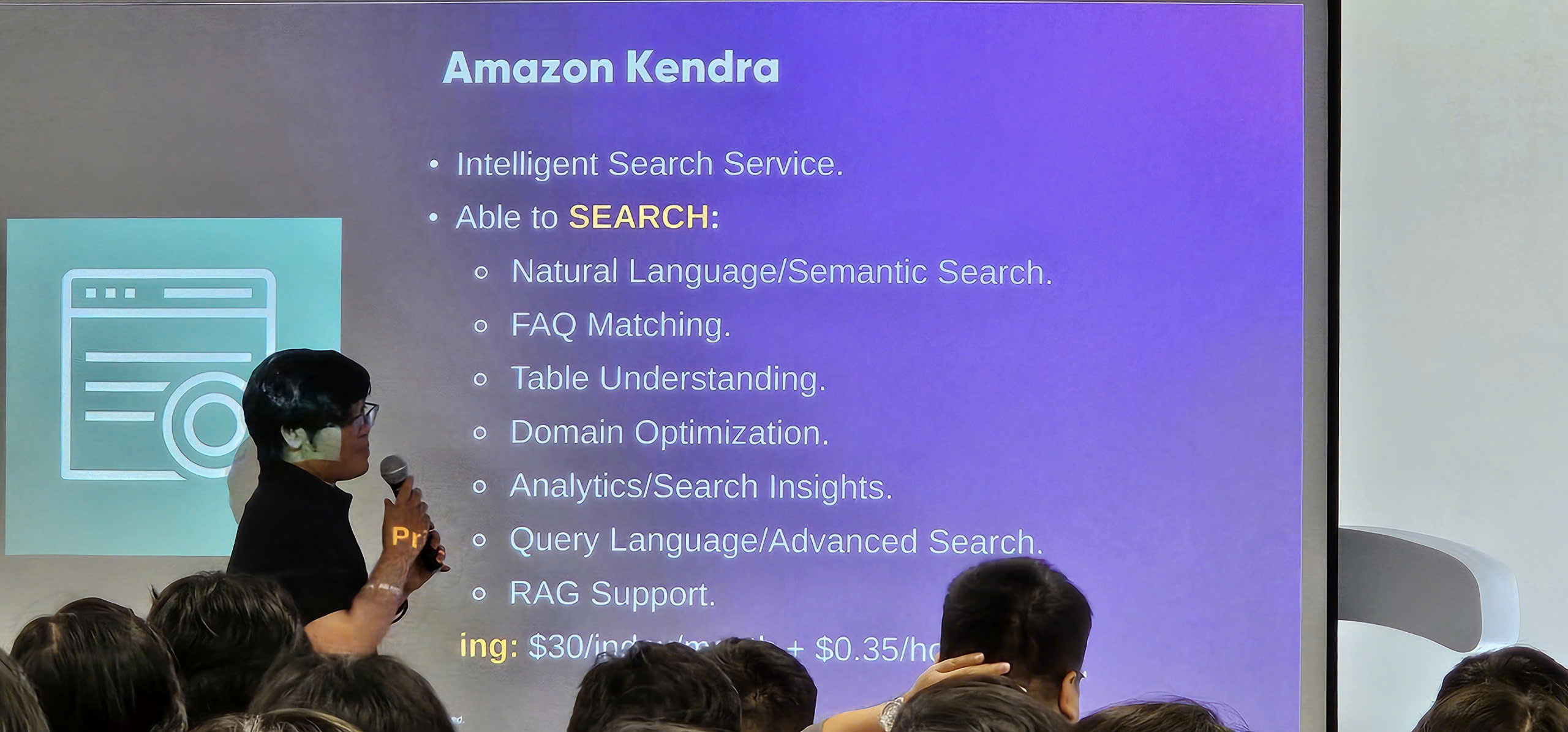

- Amazon Kendra: Intelligent search service powered by ML

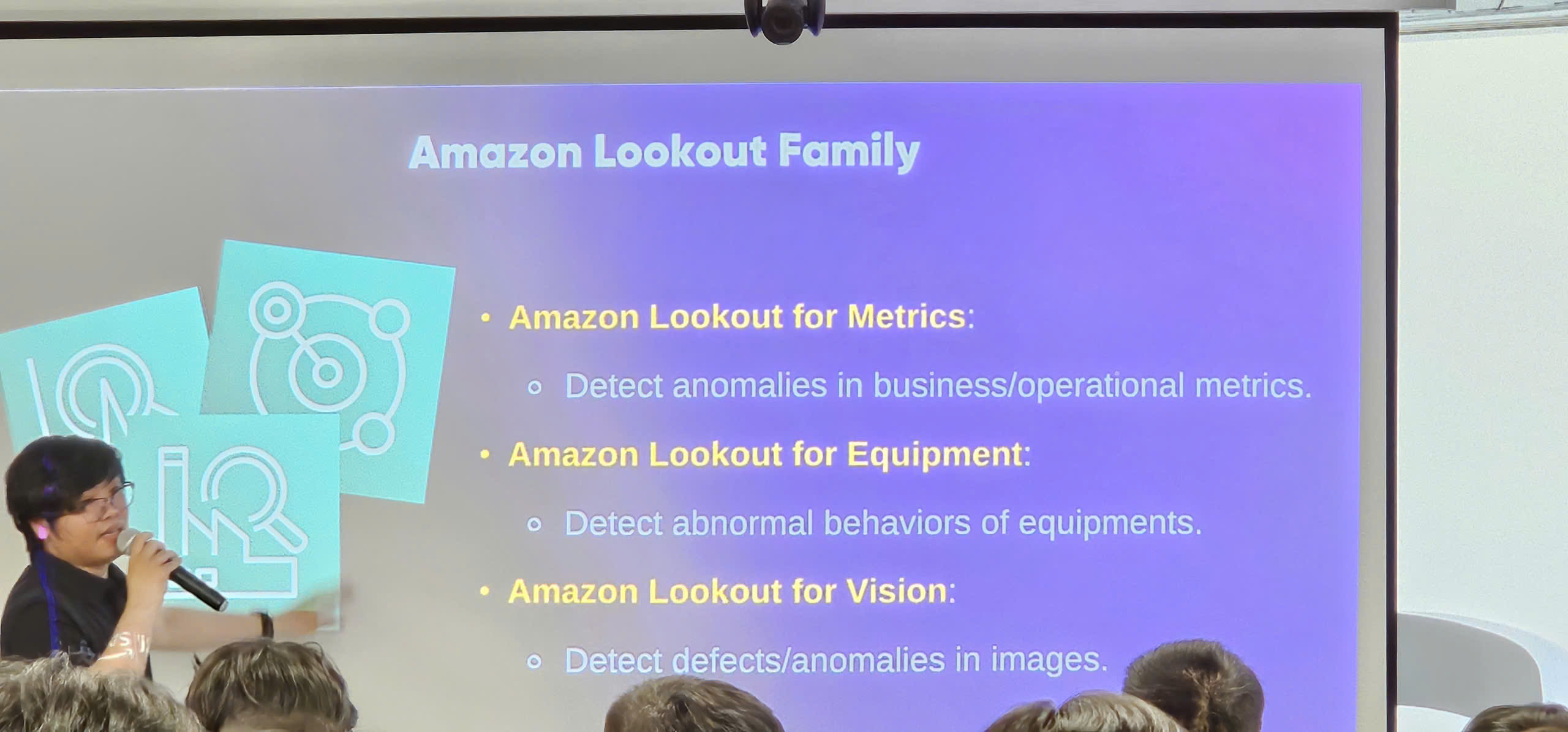

- Amazon Lookout services (Lookout for Metrics, Lookout for Equipment, Lookout for Vision): Anomaly detection for operational data

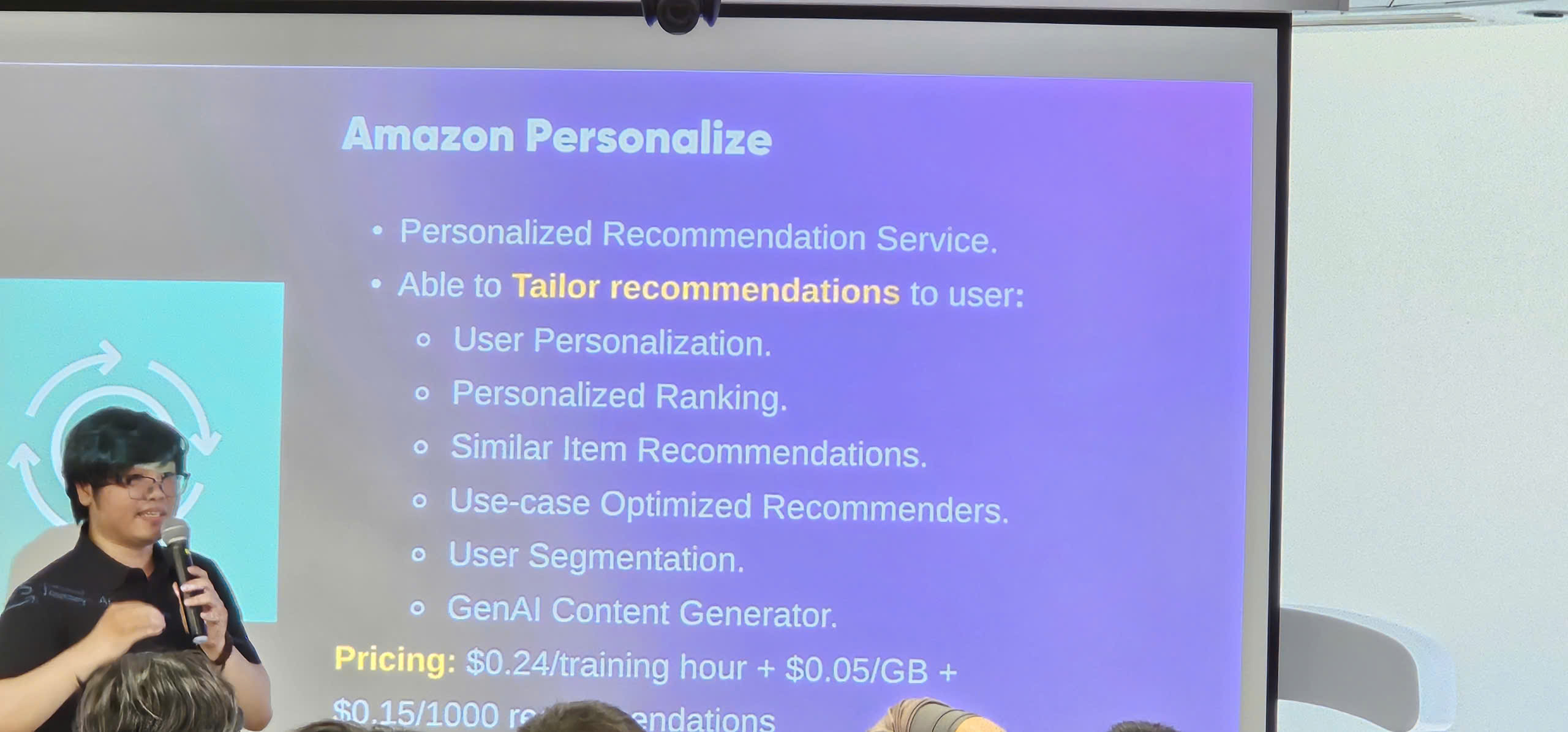

- Amazon Personalize: ML-powered recommendation engine

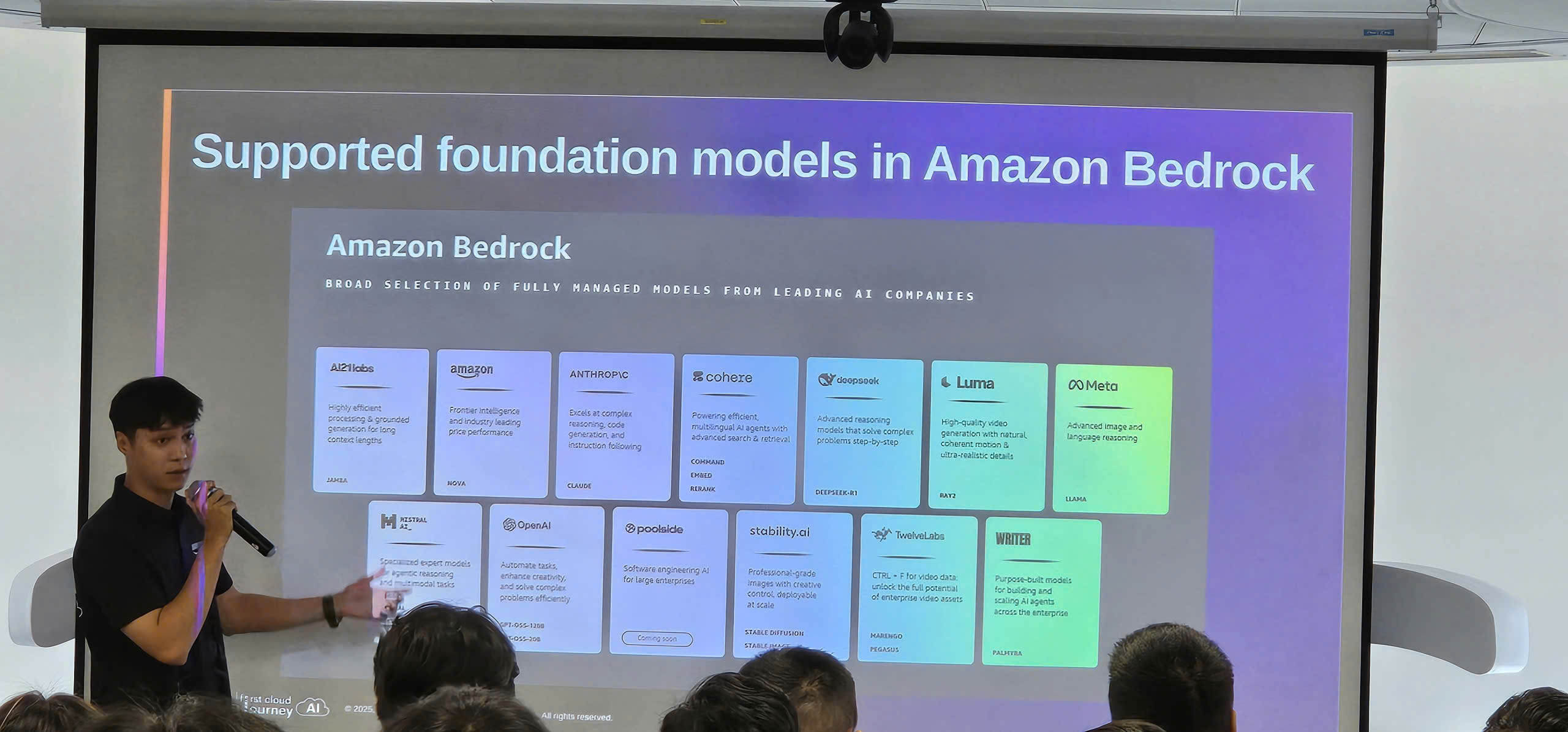

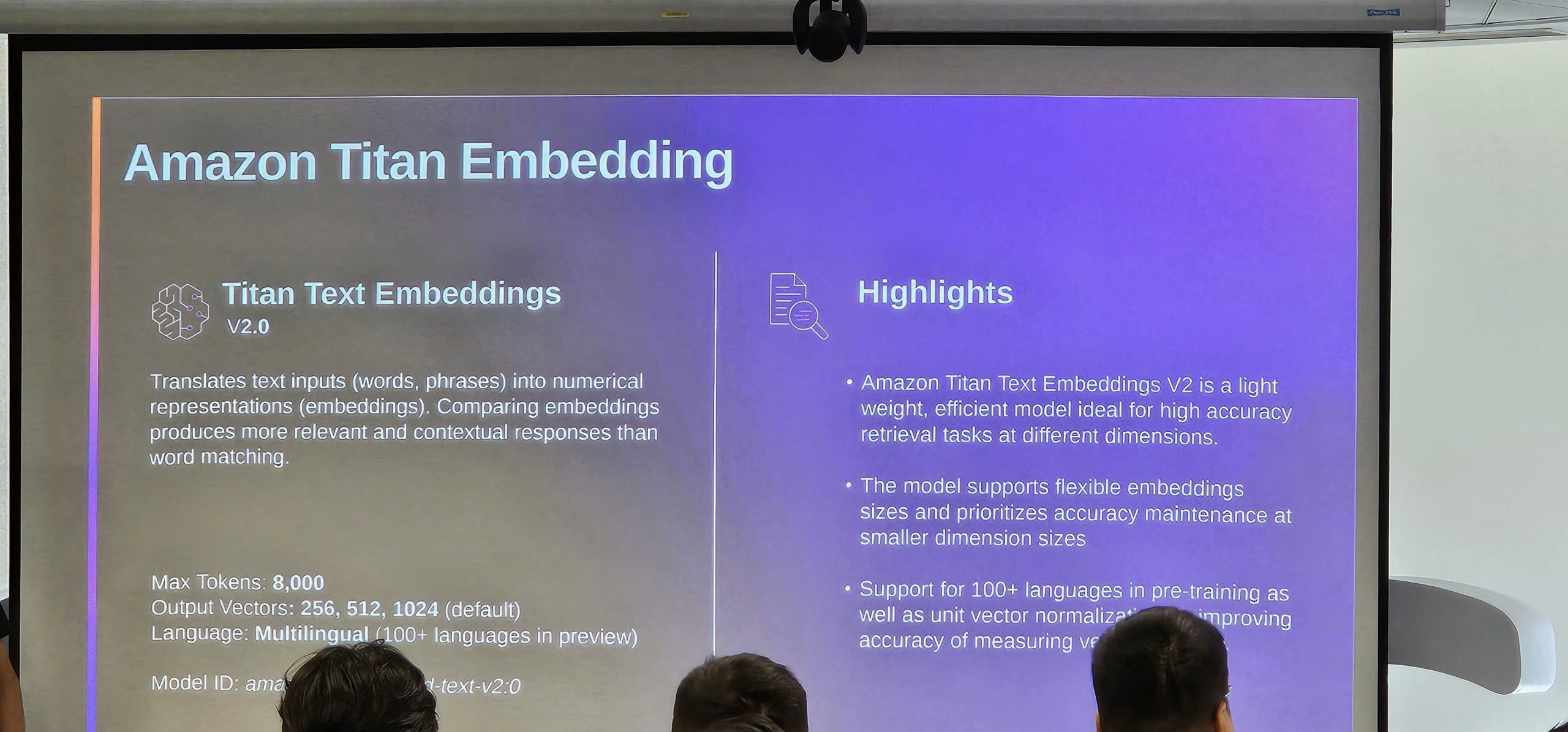

Foundation Models: Claude, Llama, Titan

- Model Comparison & Selection Guide:

  - Claude (Anthropic): Best for conversational AI and complex reasoning

  - Llama (Meta): Open-source flexibility and customization

  - Titan (Amazon): Cost-effective and AWS-native integration

  - Choosing the right model for your use case

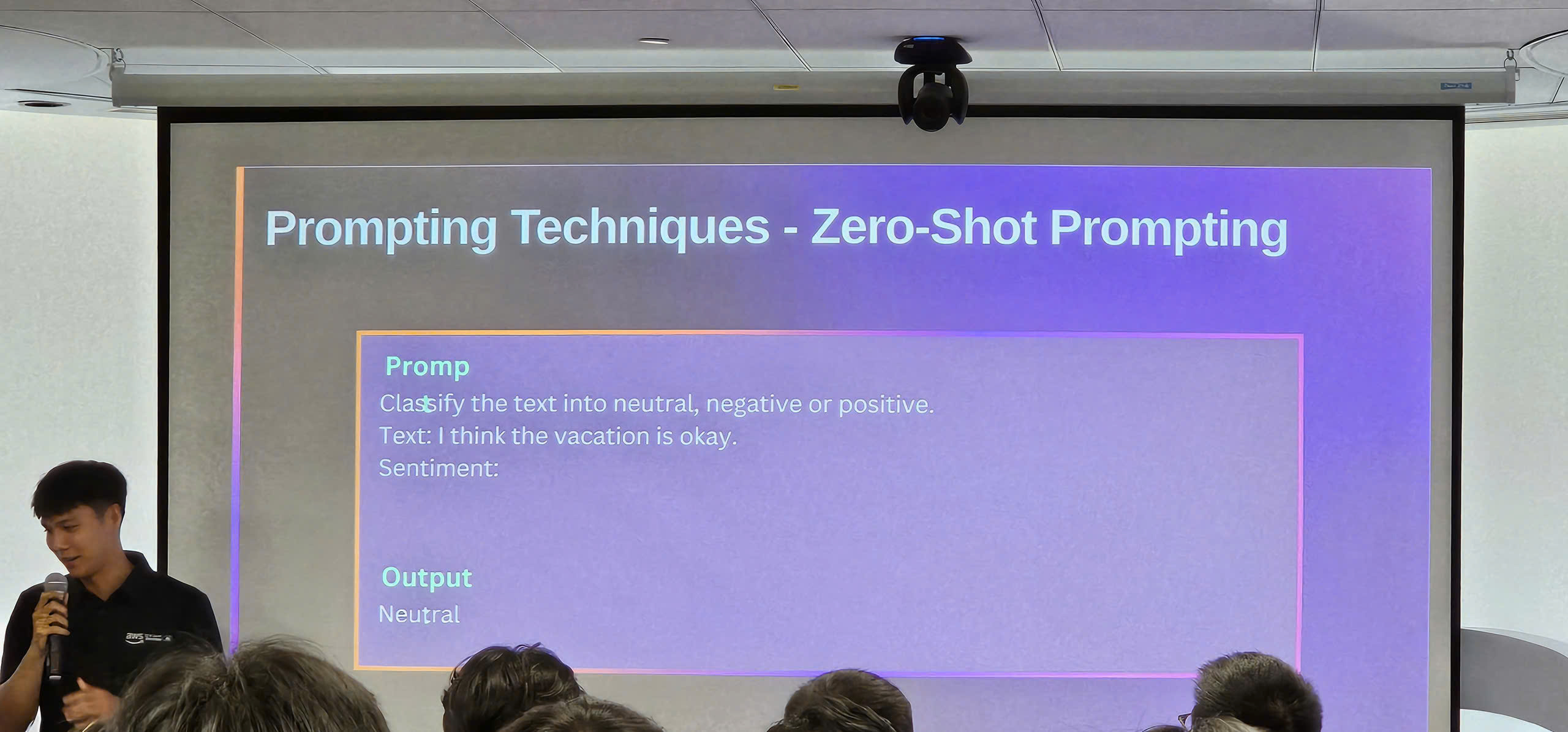

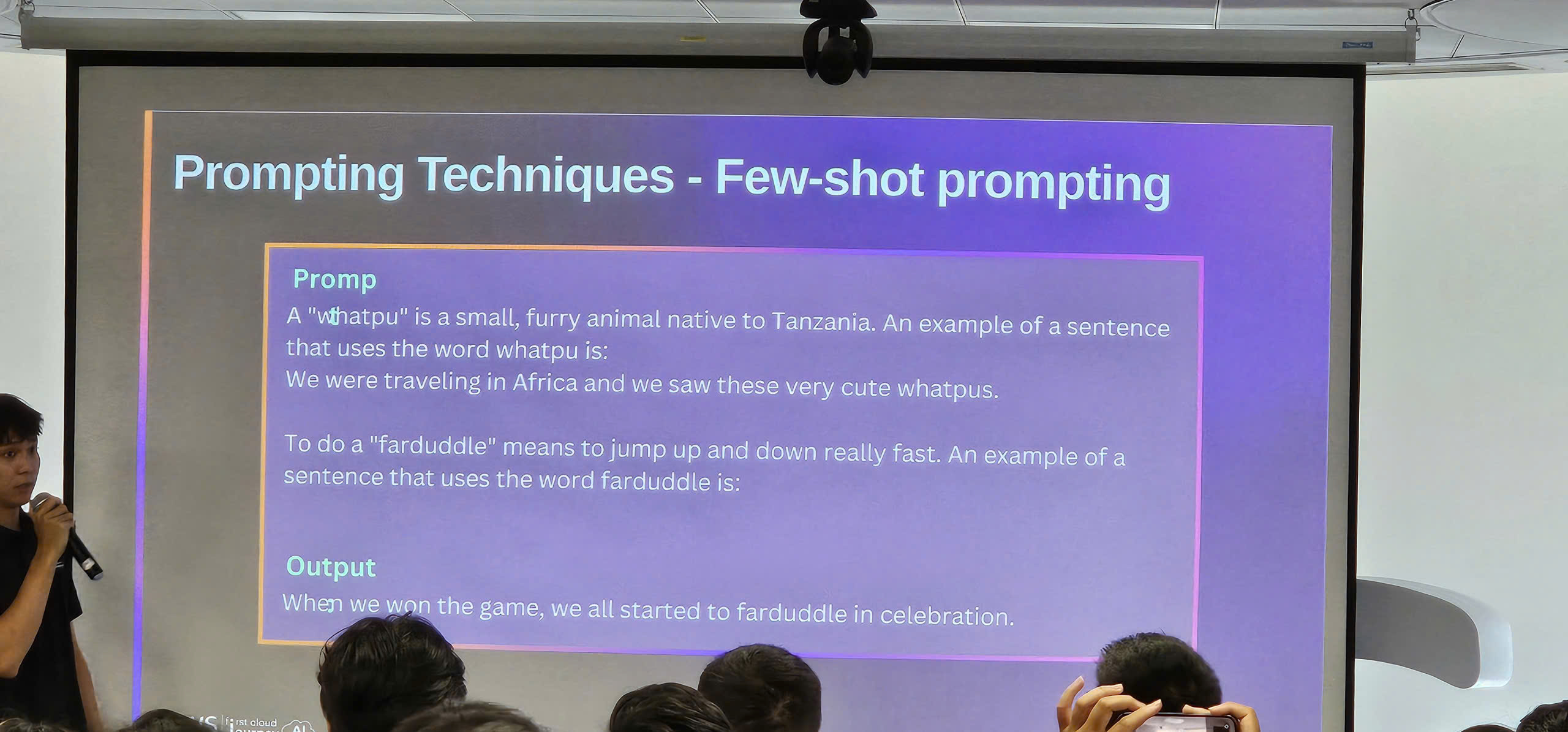

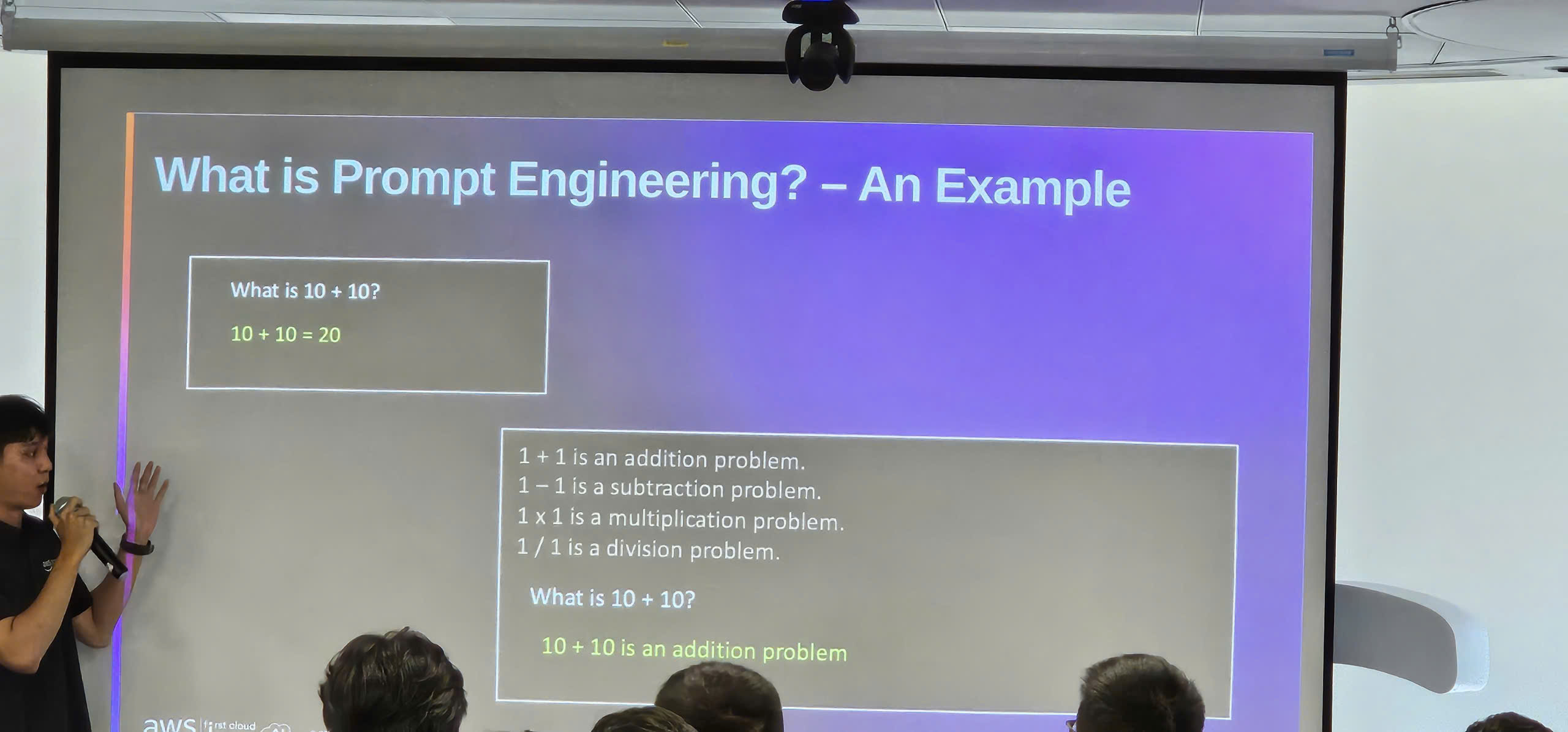

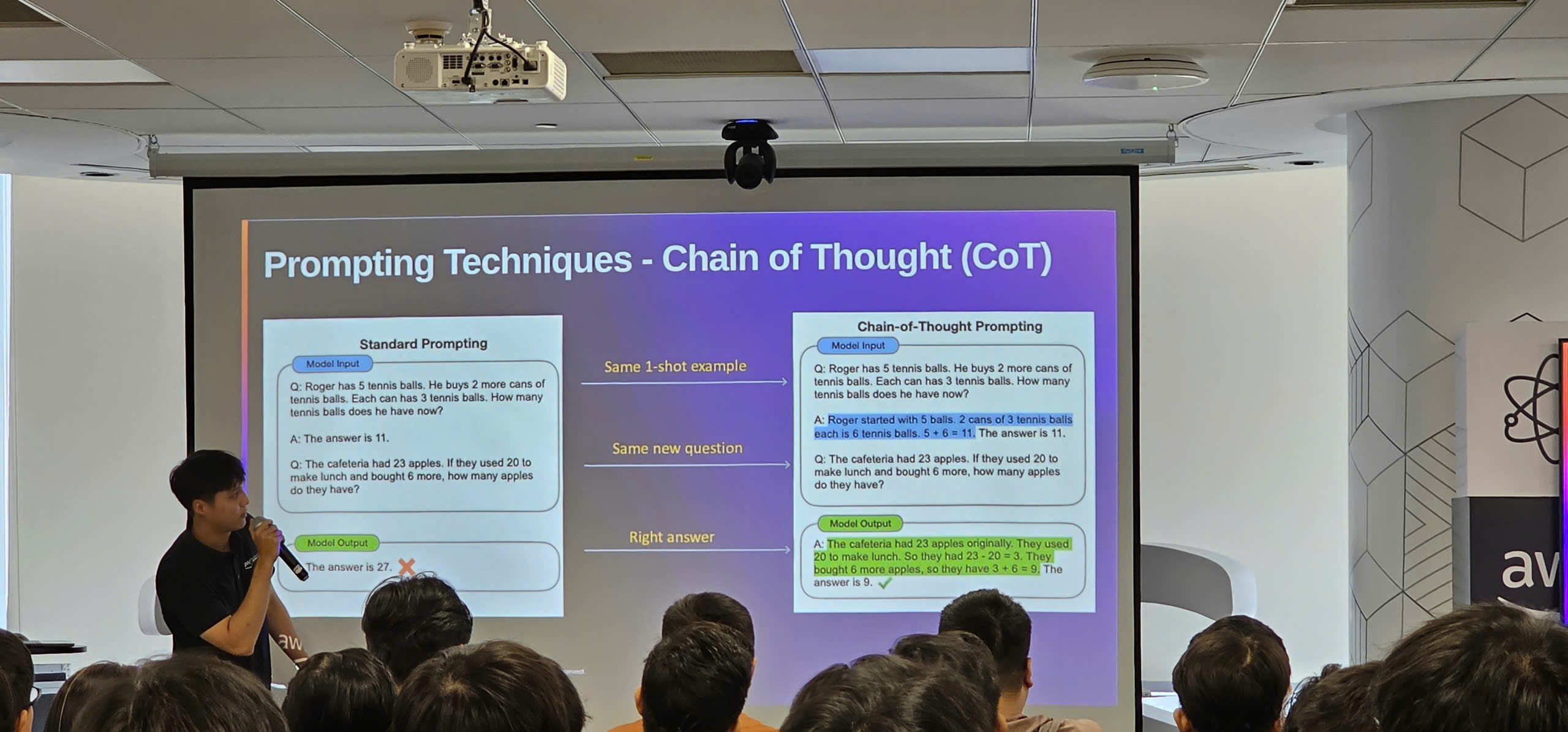

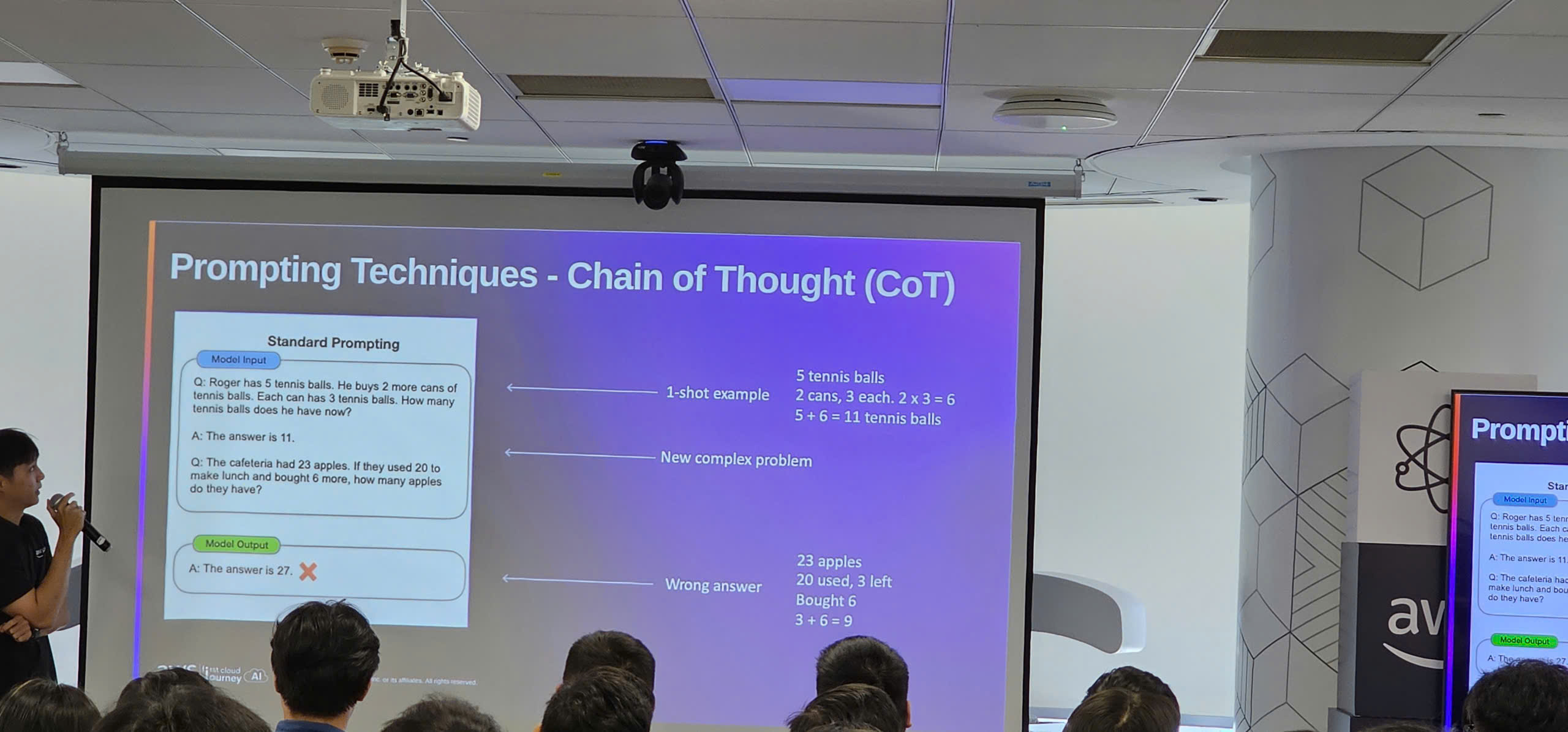

Prompt Engineering Techniques

- Effective Prompting Strategies:

  - Clear instructions and context setting

  - Few-shot learning with examples

  - Chain-of-Thought (CoT) reasoning for complex tasks

  - Role-based prompting and persona definition

- Advanced Techniques:

  - Temperature and token control

  - System prompts vs user prompts

  - Prompt templates and reusability
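
Several of the techniques above can be combined in one small sketch: a role-setting system prompt, few-shot examples, a reusable template, and temperature and token limits. The request is shaped like the keyword arguments of the Bedrock Converse API, but nothing is sent; the ticket-classification task, the examples, and the `example-model-id` placeholder are all hypothetical.

```python
from string import Template

# System prompt: fixes the role and the rules for every request.
SYSTEM_PROMPT = (
    "You are a support assistant. "
    "Classify each ticket as BILLING, TECHNICAL, or OTHER."
)

# Few-shot examples: show the model the expected input/output format.
FEW_SHOT = [
    ("I was charged twice this month.", "BILLING"),
    ("The app crashes when I upload a file.", "TECHNICAL"),
]

# Reusable user-prompt template: only the ticket text changes per request.
USER_TEMPLATE = Template("$examples\n\nTicket: $ticket\nLabel:")

def build_request(ticket, model_id, temperature=0.2, max_tokens=256):
    """Assemble keyword arguments shaped like a Bedrock Converse request."""
    examples = "\n\n".join(f"Ticket: {q}\nLabel: {a}" for q, a in FEW_SHOT)
    user_text = USER_TEMPLATE.substitute(examples=examples, ticket=ticket)
    return {
        "modelId": model_id,
        "system": [{"text": SYSTEM_PROMPT}],
        "messages": [{"role": "user", "content": [{"text": user_text}]}],
        # Low temperature favors consistent labels; maxTokens caps the output.
        "inferenceConfig": {"temperature": temperature, "maxTokens": max_tokens},
    }

request = build_request("I cannot reset my password.", model_id="example-model-id")
print(request["messages"][0]["content"][0]["text"])
# With AWS credentials and model access, the request could be sent with:
#   boto3.client("bedrock-runtime").converse(**request)
```

Keeping the system prompt and the template separate from the per-request input makes it easier to version prompts and to test the same prompt against different foundation models.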

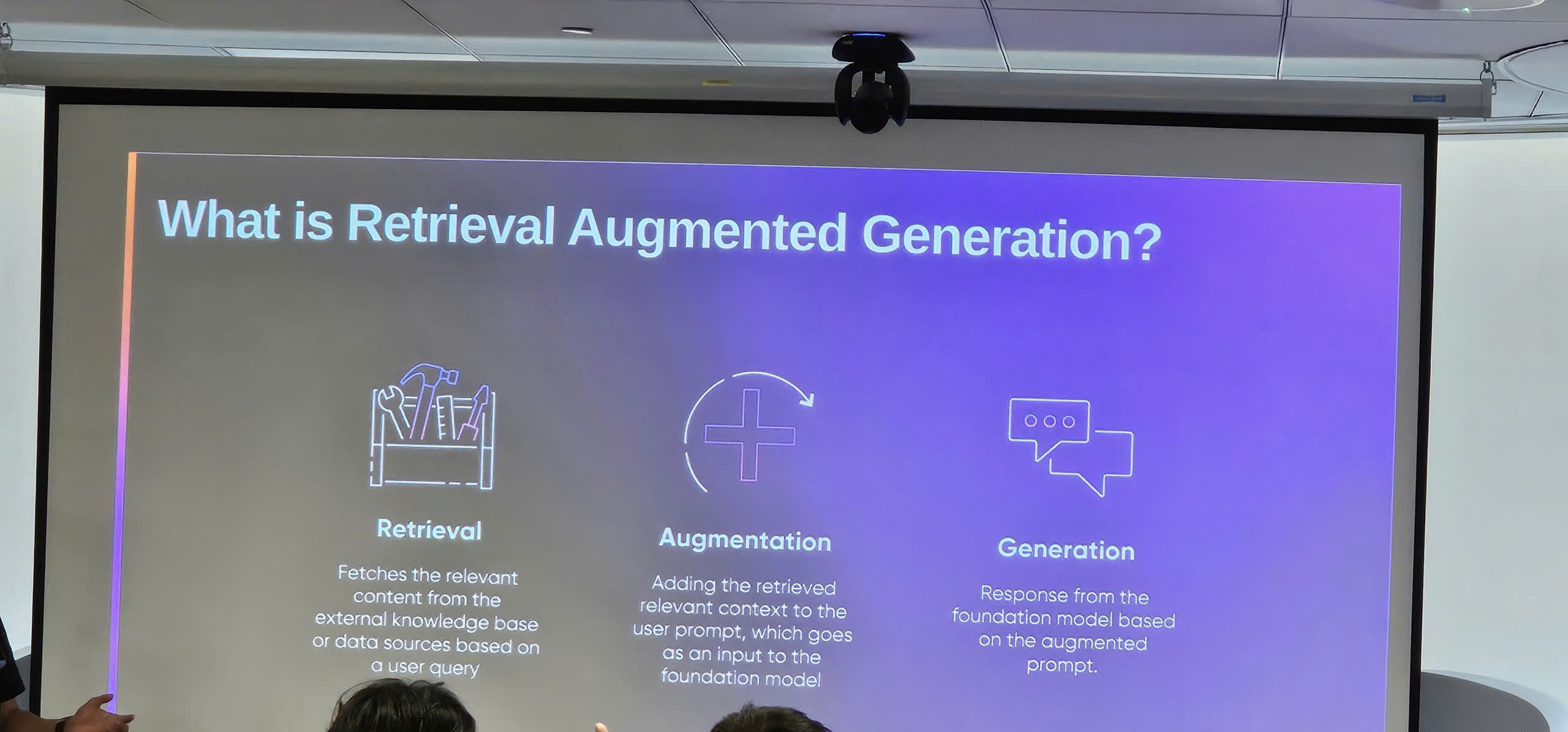

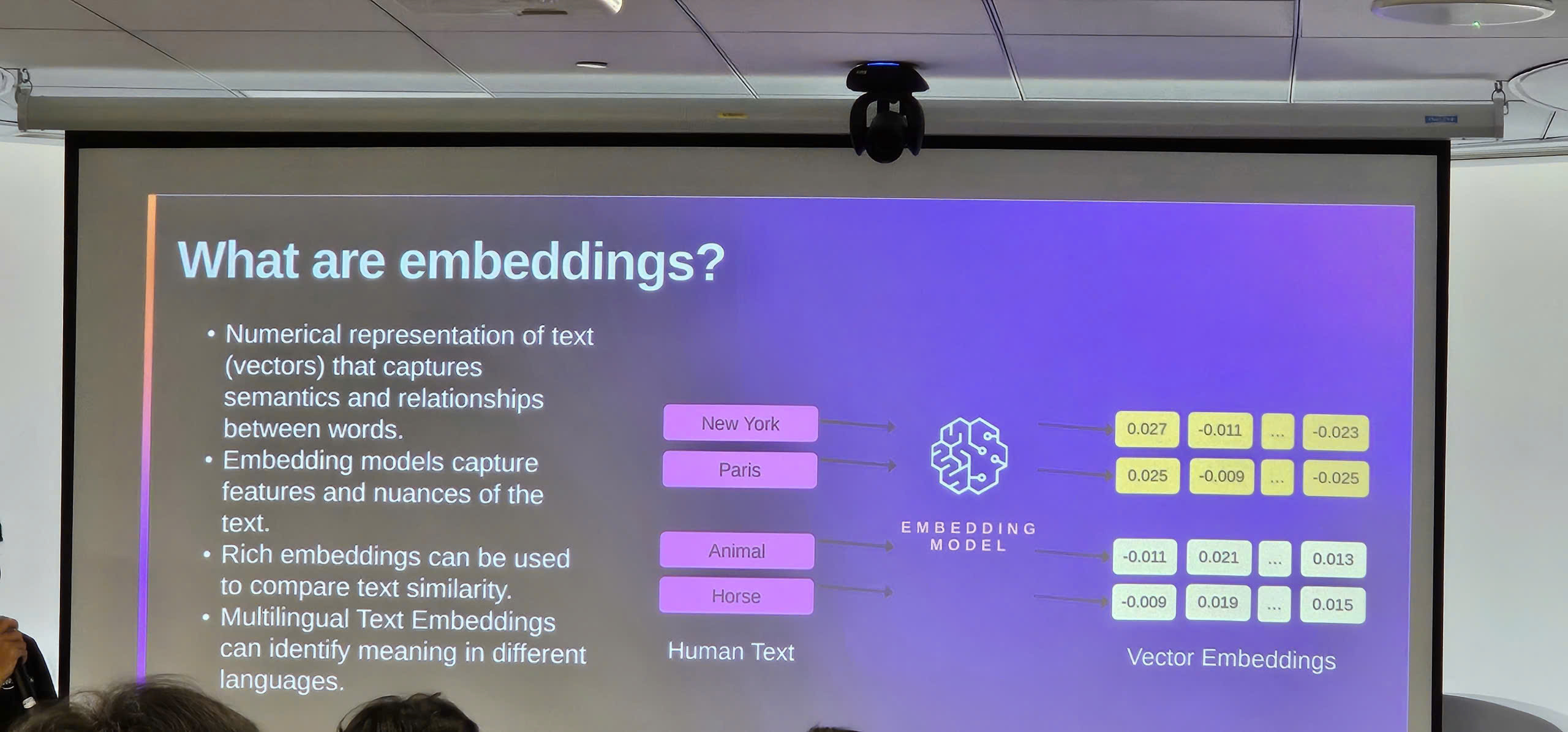

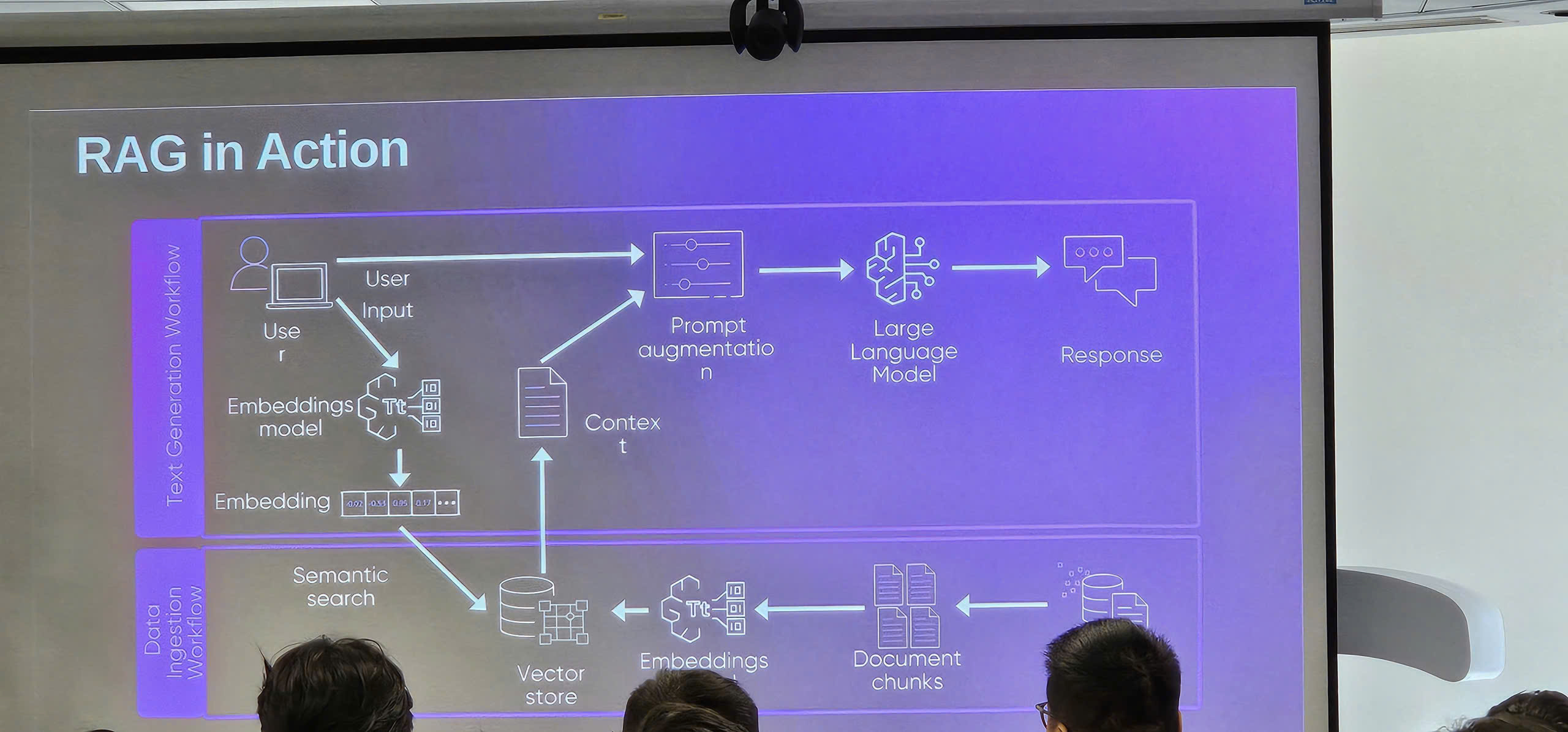

Retrieval-Augmented Generation (RAG)

- RAG Architecture:

  - Vector databases and embeddings

  - Semantic search and document retrieval

  - Context injection into prompts
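
The three RAG steps above (embed, retrieve, inject) can be sketched end to end in a few lines. This is a toy version under stated assumptions: the "embedding" is a bag-of-words count vector rather than a real embedding model, the in-memory list stands in for a vector database, and the documents are made up.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: word counts (a real system would call an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

DOCS = [
    "SageMaker Pipelines automates machine learning workflows.",
    "Bedrock Guardrails filter harmful content and PII.",
    "Knowledge Bases connect foundation models to documents stored in S3.",
]
INDEX = [(doc, embed(doc)) for doc in DOCS]  # stand-in for a vector database

def retrieve(question, k=1):
    """Return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def build_prompt(question, k=1):
    """Inject the retrieved documents into the prompt as context."""
    context = "\n".join(f"- {d}" for d in retrieve(question, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

print(build_prompt("Where are documents for knowledge bases stored?"))
```

The prompt that reaches the model contains only the most relevant document, which is what lets the model answer from data it was never trained on.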

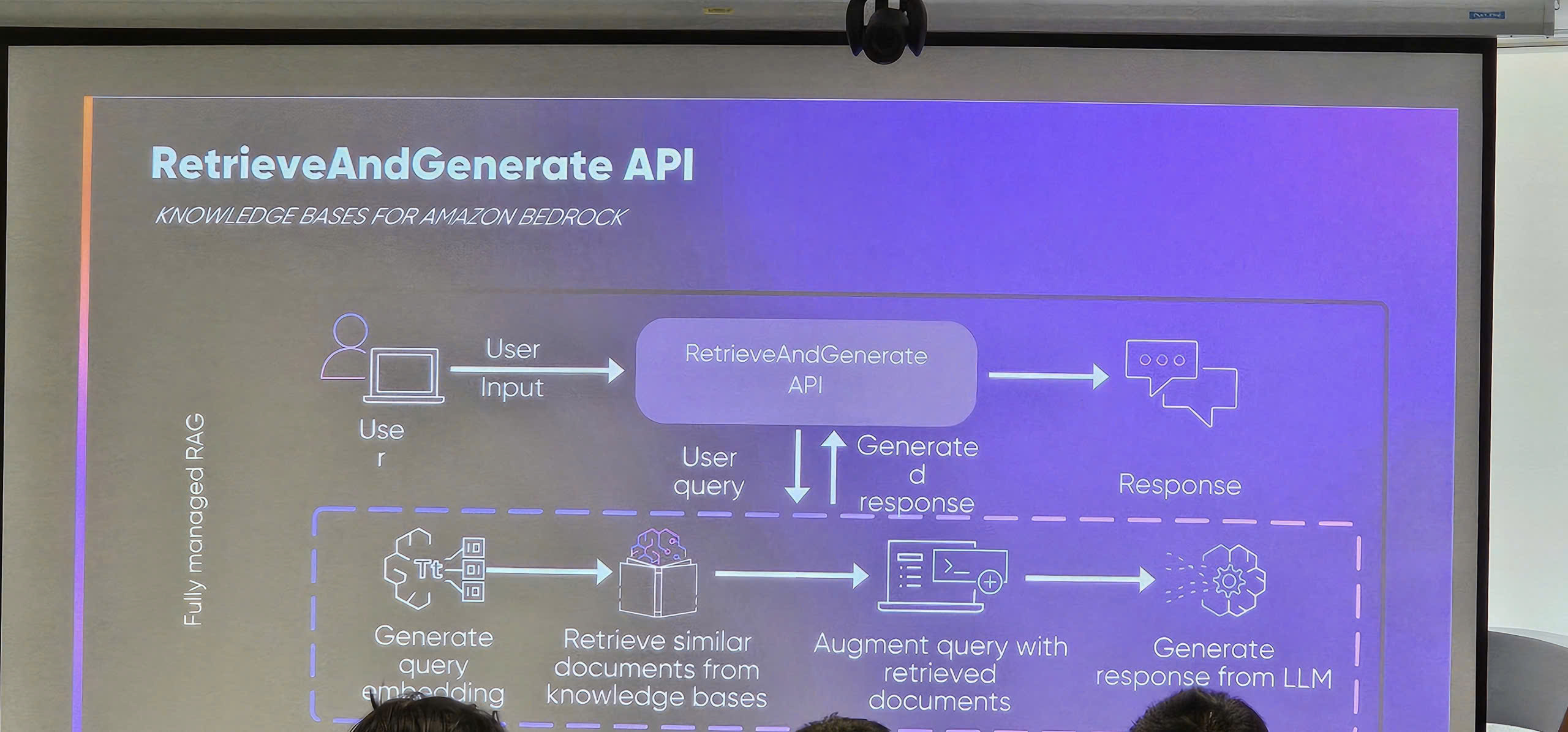

- Knowledge Base Integration:

  - Amazon Bedrock Knowledge Bases

  - Amazon S3 for storing documents and knowledge base data

  - Connecting to data sources (S3 buckets, databases, APIs)

  - Chunking strategies and metadata management

  - S3 bucket policies and access control for secure data storage
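
One common chunking strategy is fixed-size windows with overlap, so that a sentence cut at a boundary still appears whole in a neighboring chunk. The sketch below shows that idea with per-chunk metadata; the chunk size, overlap, metadata fields, and S3 URI are illustrative assumptions, not settings taken from the workshop.

```python
def chunk_text(text, source, chunk_size=40, overlap=10):
    """Split text into overlapping fixed-size word windows with metadata."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        window = words[start:start + chunk_size]
        chunks.append({
            "text": " ".join(window),
            # Metadata lets retrieved chunks be traced back to their origin.
            "metadata": {
                "source": source,
                "start_word": start,
                "chunk_index": len(chunks),
            },
        })
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the document
    return chunks

document = " ".join(f"w{i}" for i in range(100))  # synthetic 100-word document
chunks = chunk_text(document, source="s3://example-bucket/handbook.txt")
for c in chunks:
    print(c["metadata"], len(c["text"].split()))
```

Larger chunks carry more context per retrieval hit but dilute relevance; smaller chunks are more precise but may lose surrounding meaning, so the sizes are worth tuning per document type.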

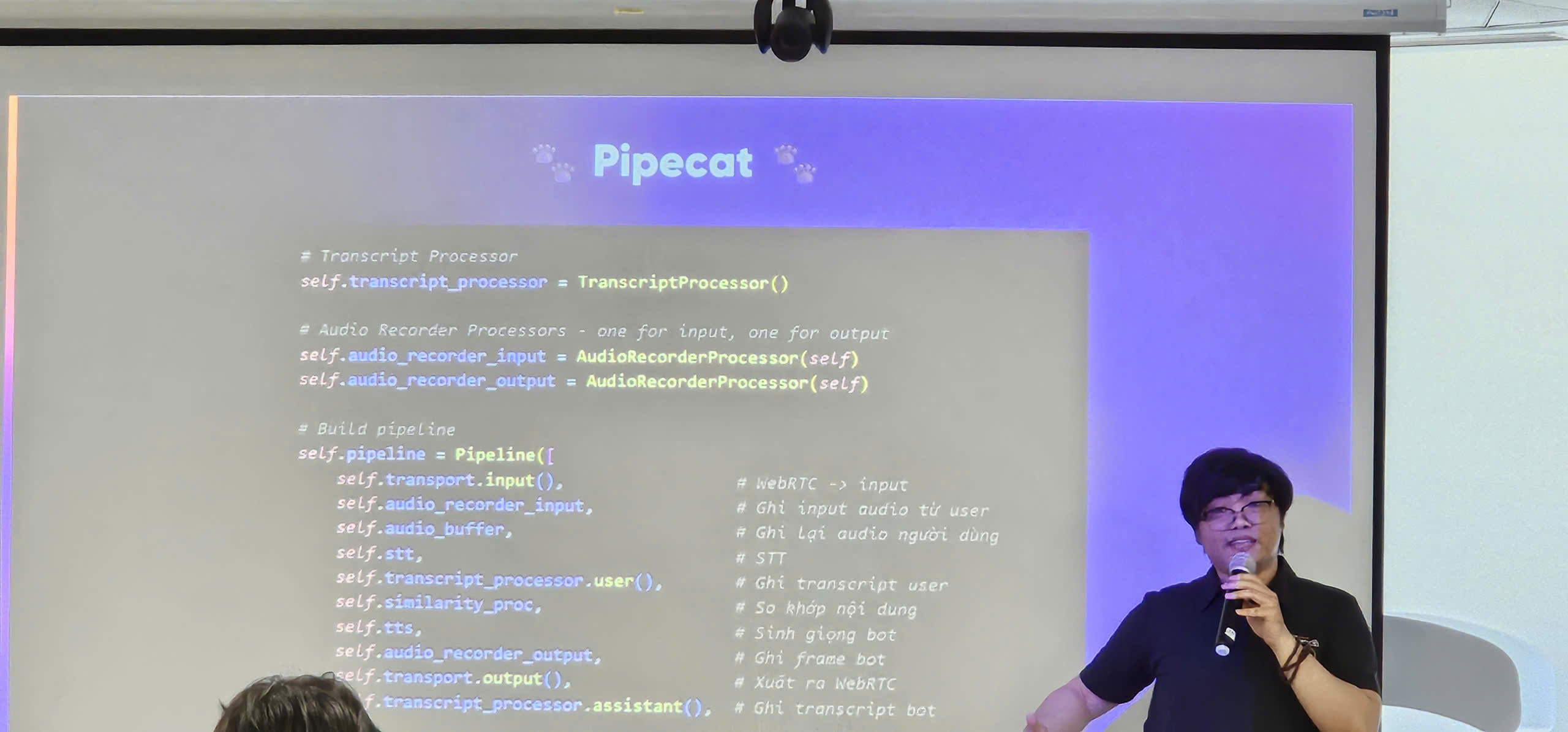

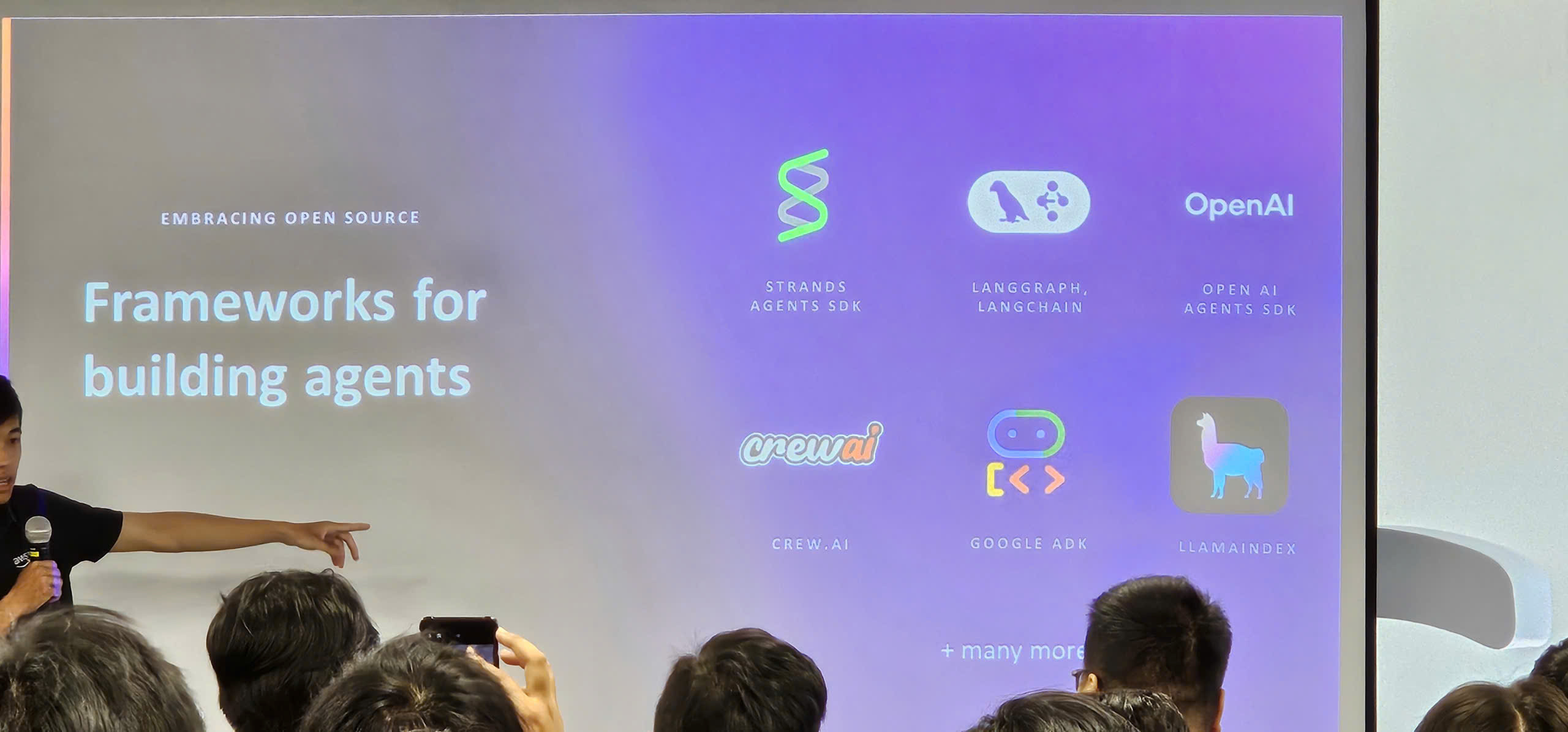

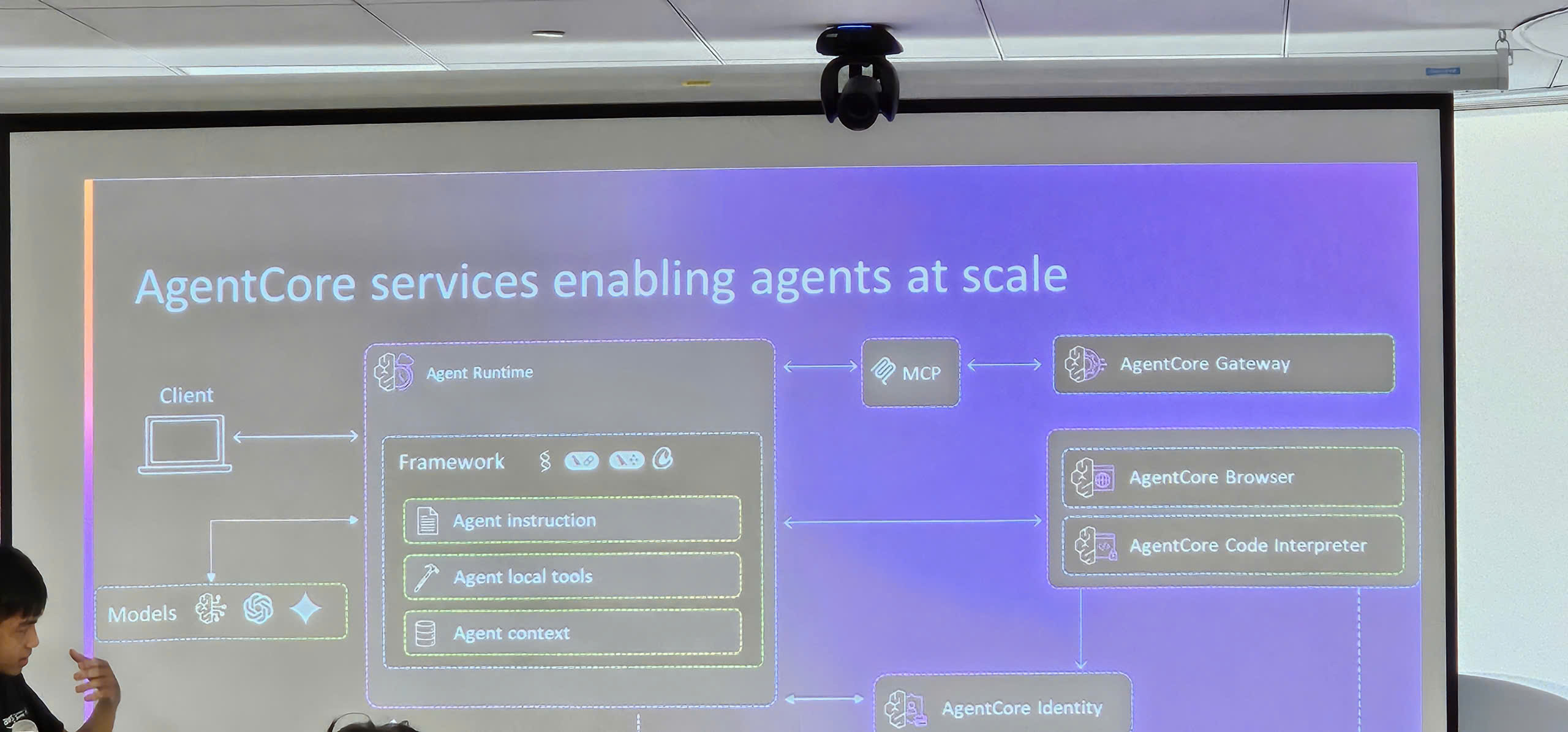

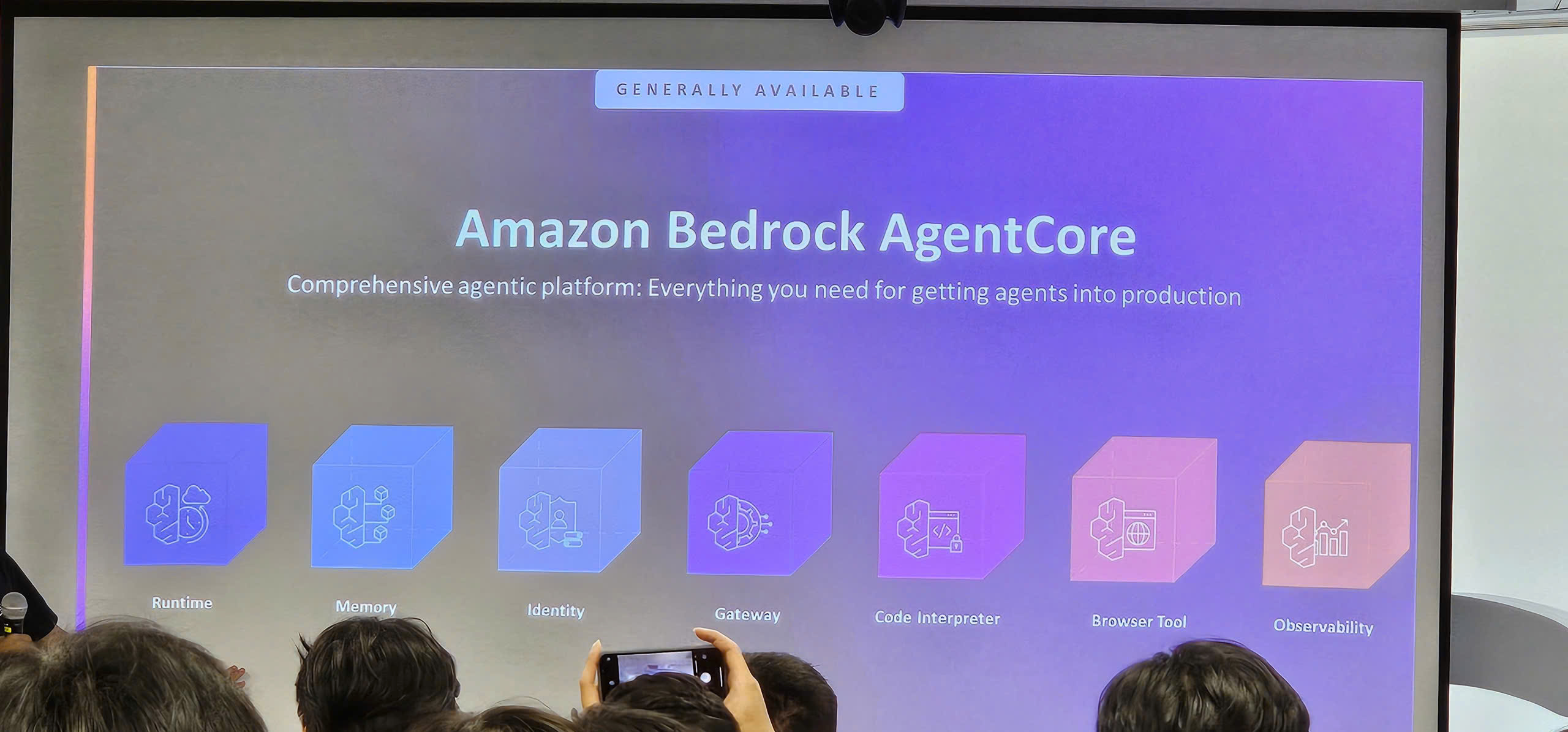

Amazon Bedrock AgentCore

- Agent Orchestration:

  - Bedrock AgentCore for building autonomous AI agents

  - Multi-step reasoning and task planning

  - Action groups and API integrations

  - Memory and conversation state management

- Tool Integrations:

  - AWS Lambda functions as tools for custom business logic

  - Lambda integration for real-time data processing

  - External API connections via Lambda

  - Database queries and data retrieval through Lambda

  - Serverless architecture benefits with Lambda + Bedrock
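
A Lambda function used as an agent tool mostly does three things: read the function name and parameters from the event, run the matching business logic, and wrap the result in the response envelope the agent expects. The sketch below follows the function-style event and response shape of Bedrock Agents action groups as I understand it; treat the field names as an assumption to verify against the current documentation. The `get_order_status` tool and its data are hypothetical.

```python
def get_order_status(order_id):
    """Hypothetical business logic; the dict stands in for a database query."""
    orders = {"A100": "shipped", "A200": "processing"}
    return orders.get(order_id, "not found")

# Registry of functions the agent is allowed to call.
TOOLS = {"get_order_status": get_order_status}

def lambda_handler(event, context):
    """Dispatch an agent tool call to a local function and wrap the result."""
    # Parameters arrive as a list of {"name", "type", "value"} entries.
    params = {p["name"]: p["value"] for p in event.get("parameters", [])}
    name = event.get("function")
    tool = TOOLS.get(name)
    body = tool(**params) if tool else f"Unknown function: {name}"
    return {
        "messageVersion": "1.0",
        "response": {
            "actionGroup": event.get("actionGroup"),
            "function": name,
            "functionResponse": {"responseBody": {"TEXT": {"body": str(body)}}},
        },
    }

# Local smoke test with a hand-written event (no AWS account needed).
sample_event = {
    "actionGroup": "orders",
    "function": "get_order_status",
    "parameters": [{"name": "order_id", "type": "string", "value": "A100"}],
}
result = lambda_handler(sample_event, None)
print(result["response"]["functionResponse"]["responseBody"]["TEXT"]["body"])
```

Keeping the tool registry explicit means the agent can only trigger functions that were deliberately exposed, which matters once the tools reach real databases or external APIs.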

Guardrails: Safety and Content Filtering

- Content moderation and toxicity detection

- PII (Personally Identifiable Information) filtering

- Topic-based filtering and denied topics

- Custom guardrails for business requirements
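
The filtering ideas above can be illustrated with a small pre-processing function that blocks denied topics and masks PII before text reaches the model or the user. This is a simplified stand-in, not how Bedrock Guardrails is implemented: the regular expressions, the denied topic, and its trigger phrases are assumptions chosen for the example.

```python
import re

# Simplified PII patterns; real detectors cover many more formats.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}[-. ]?\d{3}[-. ]?\d{4}\b"),
}

# Hypothetical denied topic with a few trigger phrases.
DENIED_TOPICS = {
    "investment advice": ["stock tip", "which stock", "guaranteed return"],
}

def apply_guardrail(text):
    """Return (allowed, text): block denied topics, otherwise mask PII."""
    lowered = text.lower()
    for topic, phrases in DENIED_TOPICS.items():
        if any(p in lowered for p in phrases):
            return False, f"Sorry, I cannot help with {topic}."
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return True, text

print(apply_guardrail("Contact me at an.nguyen@example.com or 555-123-4567."))
print(apply_guardrail("Which stock should I buy for a guaranteed return?"))
```

Keyword and regex checks like these are easy to bypass, which is one reason a managed guardrail service that uses trained classifiers is usually preferred in production.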

Live Demo: Building a Generative AI Chatbot using Bedrock

- Setting up Bedrock foundation model access

- Creating a simple chatbot with prompt engineering

- Implementing RAG with Knowledge Bases

- Adding guardrails for safe responses

- Testing and iterating on the chatbot

Key Takeaways

Amazon SageMaker Capabilities

- End-to-End ML Platform: SageMaker provides all tools needed from data preparation to model deployment

- MLOps Integration: Built-in capabilities for automating and monitoring ML workflows

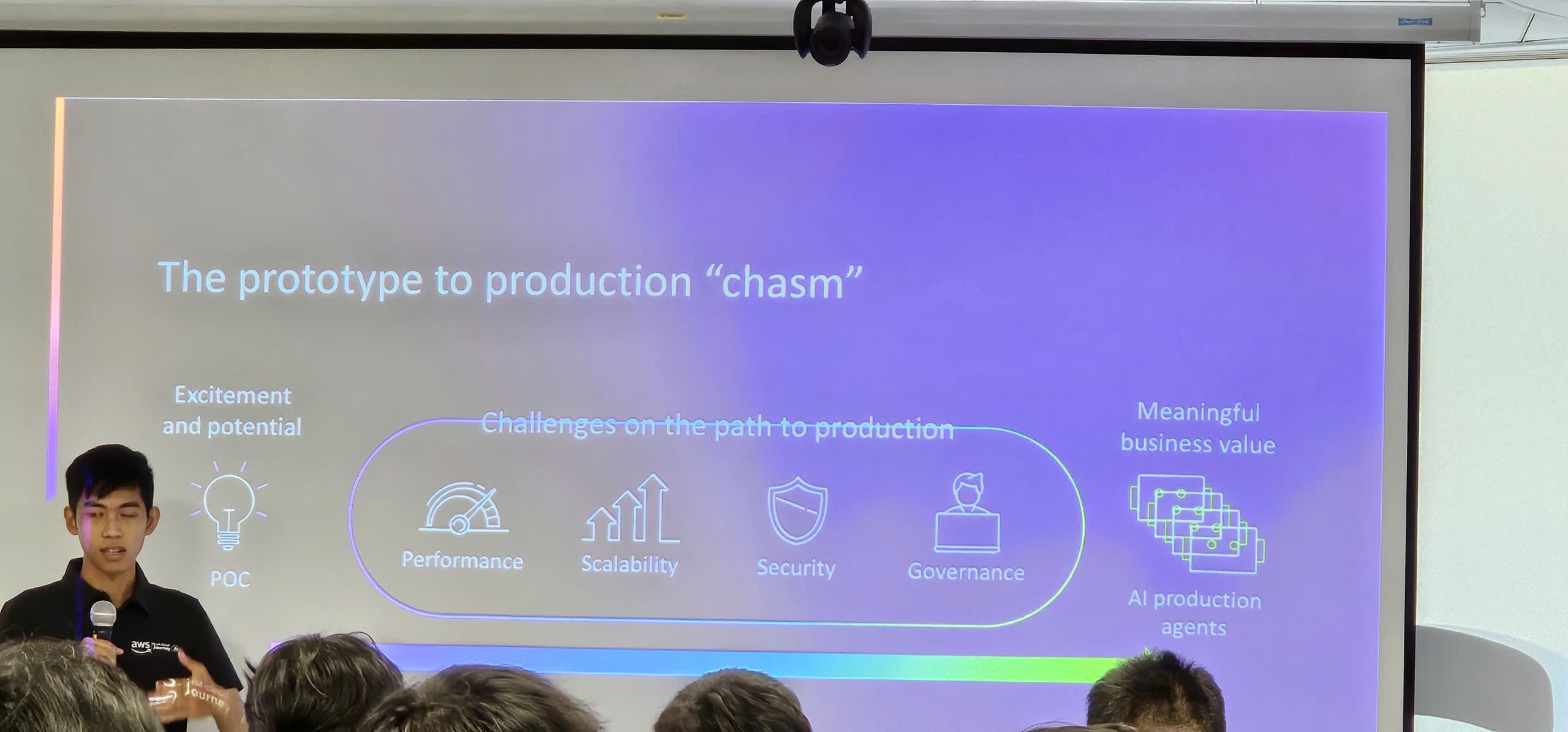

- Scalability: Easily scale from experimentation to production workloads

- Cost Optimization: Pay-as-you-go pricing with options for spot instances and serverless inference

Generative AI with Bedrock

- Model Diversity: Access to multiple foundation models without managing infrastructure

- Prompt Engineering: Critical skill for getting quality outputs from LLMs

- RAG Architecture: Combines the power of LLMs with your proprietary data

- Safety First: Guardrails help enforce responsible AI deployment by filtering harmful content, PII, and denied topics

- Agent Capabilities: Enable complex, multi-step AI workflows

Practical Implementation

- Start with Use Cases: Identify specific business problems AI/ML can solve

- Experiment and Iterate: Use SageMaker Studio for rapid prototyping

- Leverage Pre-built Models: Start with foundation models before custom training

- Implement Guardrails: Always prioritize safety and compliance

- Monitor and Optimize: Continuously track model performance and costs

Applying to Work

- Explore SageMaker: Start with the SageMaker Studio free tier to experiment with ML workflows

- Build RAG Applications: Implement knowledge base integration for domain-specific chatbots

- Practice Prompt Engineering: Develop effective prompting strategies for your use cases

- Implement MLOps: Automate ML pipelines using SageMaker Pipelines

- Deploy Bedrock Agents: Create intelligent agents for automating business processes

- Ensure Compliance: Use Bedrock Guardrails to meet regulatory requirements

- Share Knowledge: Document learnings and best practices with your team

Event Experience

Attending the “AI/ML/GenAI on AWS Workshop” at AWS Vietnam Office was an immersive learning experience that provided hands-on exposure to cutting-edge AI/ML technologies. Key experiences included:

Learning from AWS Experts

- AWS Solutions Architects provided comprehensive insights into SageMaker’s end-to-end ML capabilities

- AWS GenAI Specialists demonstrated practical applications of Amazon Bedrock and foundation models

- Real-world use cases illustrated how Vietnamese companies are leveraging AWS AI/ML services

- Expert guidance on choosing the right tools and models for specific business needs

Hands-on Demonstrations

- Witnessed SageMaker Studio in action, from data preparation to model deployment

- Saw how Amazon Bedrock enables rapid development of GenAI applications without infrastructure management

- Learned practical prompt engineering techniques that immediately improve LLM outputs

- Explored RAG architecture for building knowledge-aware AI applications

- Understood how Bedrock Agents orchestrate complex multi-step workflows

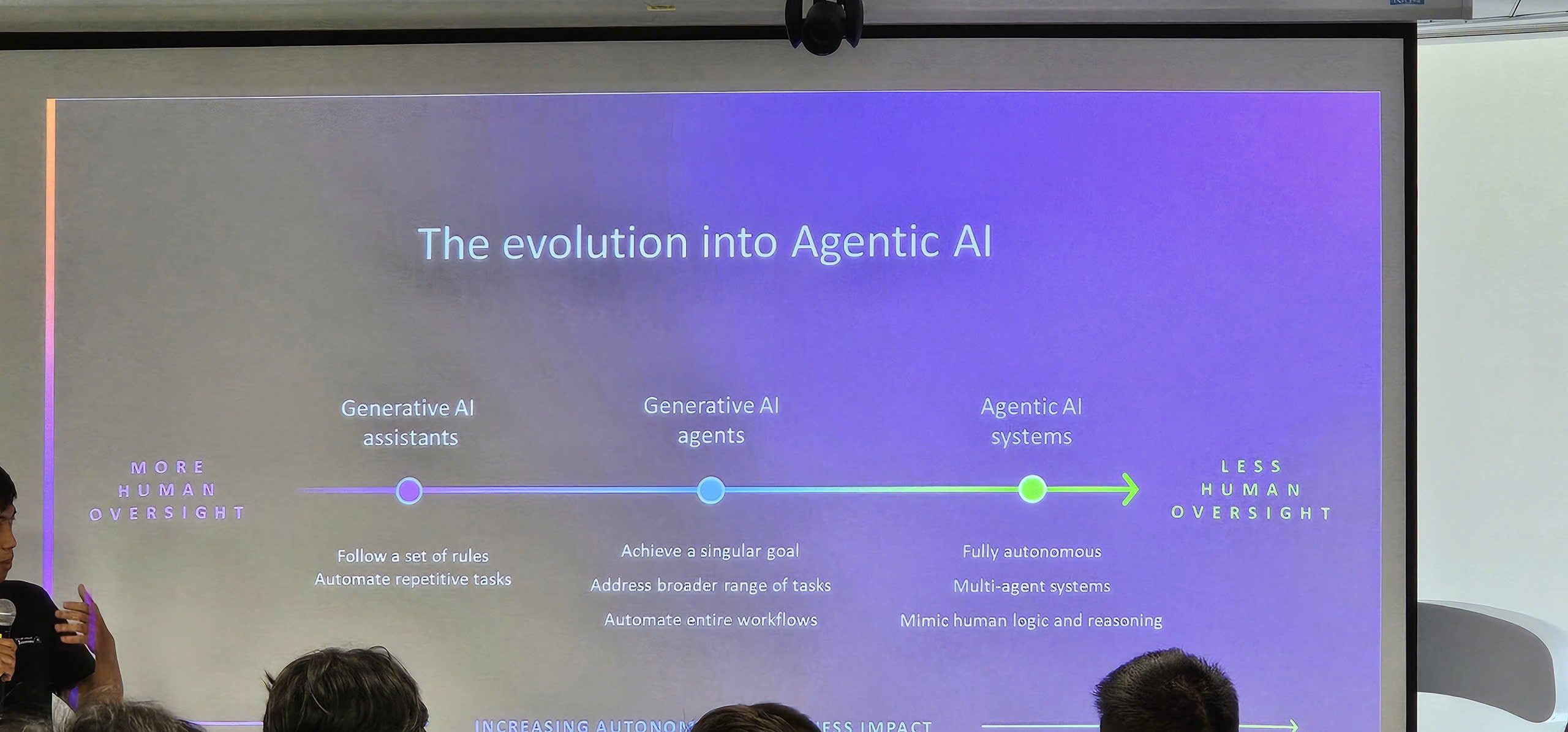

Understanding AI/ML Landscape

- Gained insights into the AI/ML adoption trends in Vietnam

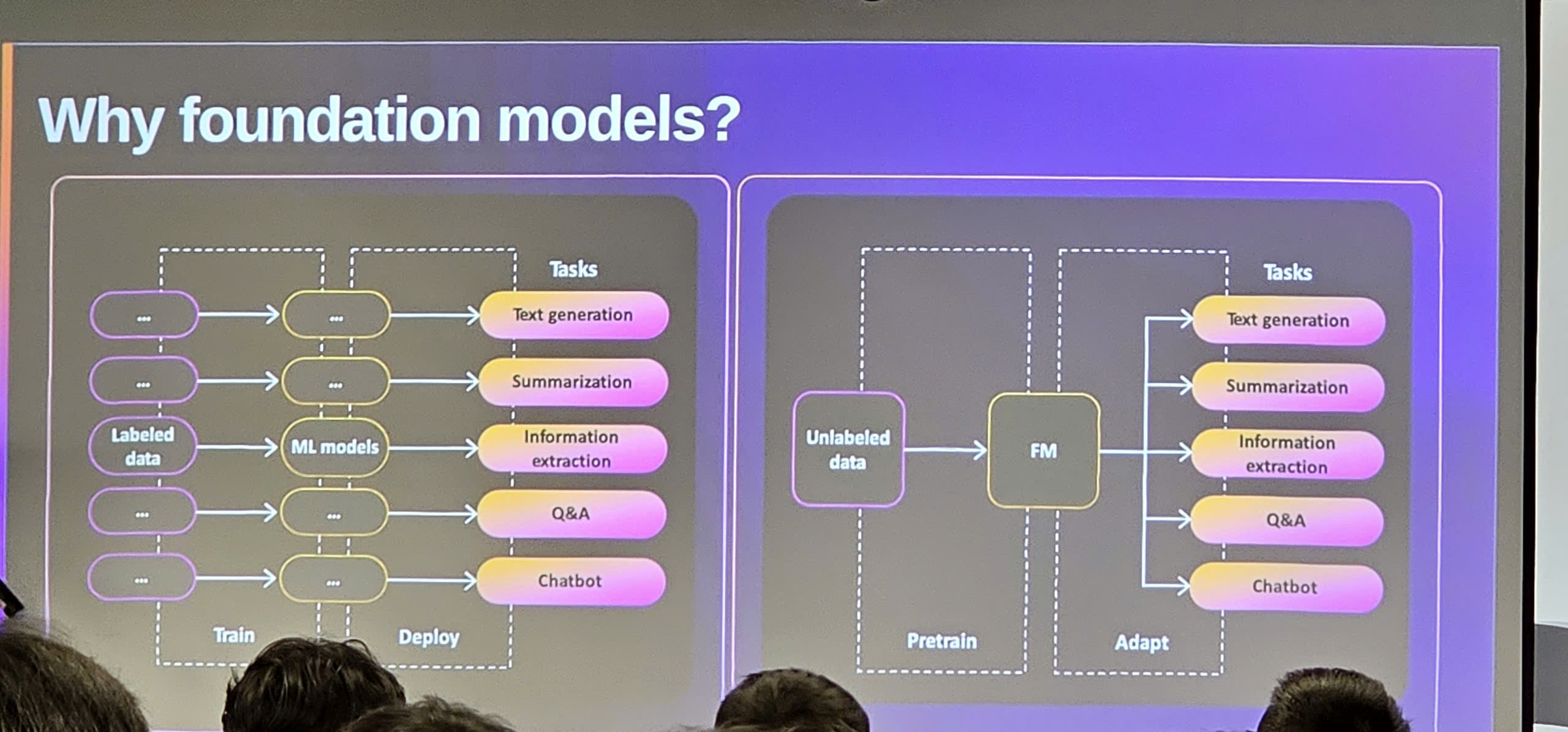

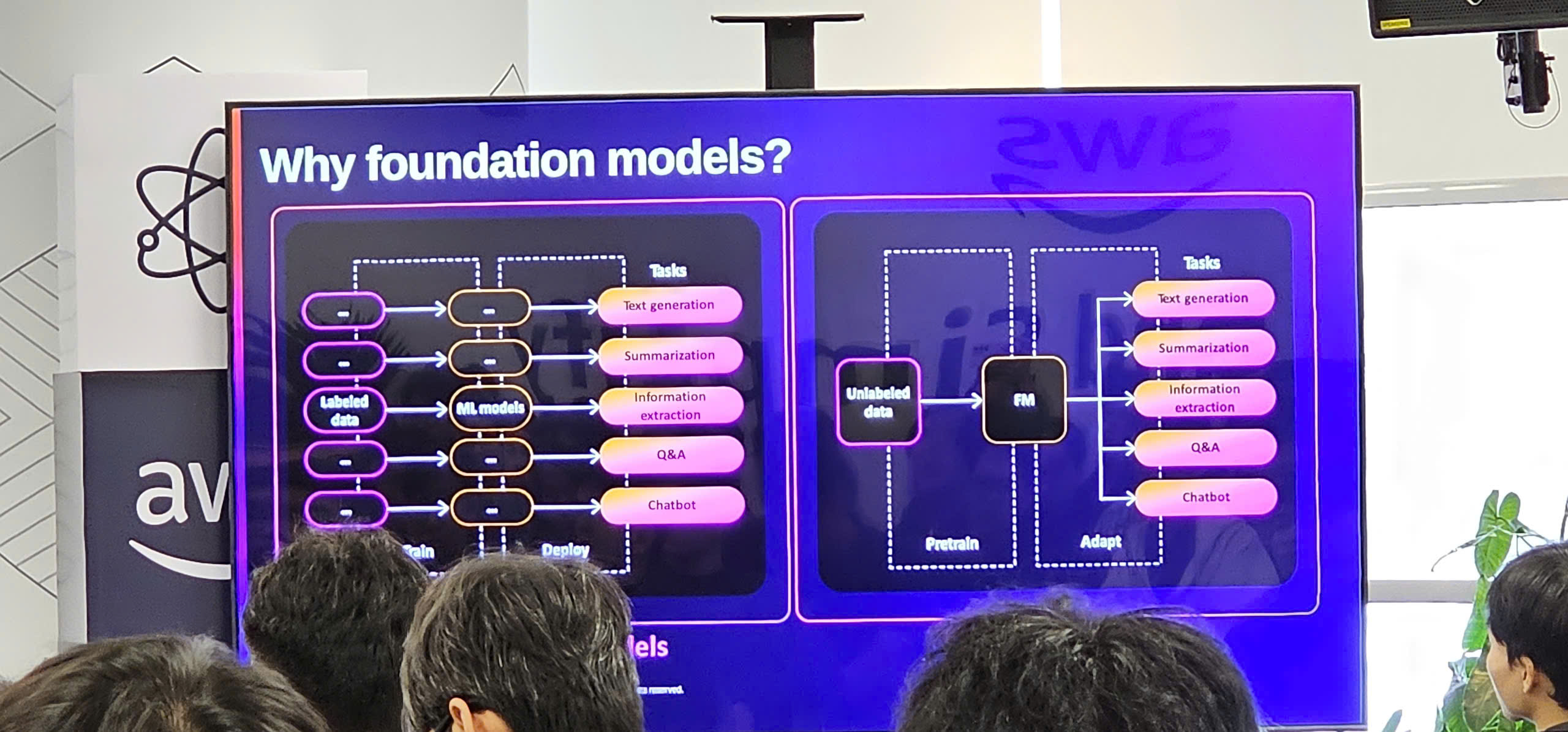

- Learned about the differences between traditional ML and Generative AI approaches

- Understood when to use SageMaker vs Bedrock for different use cases

- Discovered the importance of MLOps for production ML systems

Networking and Community Building

- Connected with fellow developers and data scientists exploring AWS AI/ML services

- Exchanged ideas about practical AI/ML implementation challenges and solutions

- Built relationships with AWS experts for ongoing support and guidance

- Joined the AWS AI/ML community for continuous learning

Practical Insights Gained

- Foundation models democratize access to powerful AI capabilities without requiring massive resources

- Prompt engineering is a critical skill that significantly impacts GenAI application quality

- RAG architecture addresses the problem of LLMs lacking domain-specific knowledge by grounding responses in retrieved documents

- Guardrails are essential for responsible and compliant AI deployment

- SageMaker provides a complete platform that accelerates ML development and deployment

Next Steps

- Begin experimenting with SageMaker Studio using the free tier

- Build a proof-of-concept RAG application using Bedrock Knowledge Bases

- Practice prompt engineering techniques on different foundation models

- Explore Bedrock Agents for automating business workflows

- Implement MLOps practices using SageMaker Pipelines

- Continue engaging with the AWS AI/ML community for ongoing learning

Event Pictures

Overall, this workshop provided a comprehensive introduction to AWS AI/ML services, from traditional machine learning with SageMaker to cutting-edge Generative AI with Bedrock. The hands-on demonstrations and expert guidance made complex concepts accessible and immediately applicable. The key takeaway is that AWS provides a complete ecosystem for building, deploying, and scaling AI/ML applications, making it easier than ever to bring AI innovations to production.